When I read about ORNL Titan becoming the fastest supercomputer in November 2012, I thought that the real question was not how to get from ~20 petaflops to ~2 exaflops, but how to get from ~2 exaflops to ~200 exaflops. I think the hardest part is in front of us, not behind us, because the path to ANL Aurora is relatively straightforward. You increase the number of GPUs by 4x, increase power draw by 6x, increase single-core GPU fp64 performance by 5x (mainly by improving the fp64-to-fp32 ratio) and increase the number of cores by 5x as well. I don't think another 100x will come as easily as that.
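To make the arithmetic explicit, here is a quick sketch in Python. The factors are the round numbers quoted above, not measured data, and it assumes that "number of cores" means cores per GPU, so that the three performance factors simply multiply. The name `combined_speedup` is made up for illustration.

```python
import math

# Round numbers quoted in the post (not measured data).
gpu_count_factor = 4       # more GPUs in the machine
per_core_fp64_factor = 5   # fp64 throughput per GPU core
cores_per_gpu_factor = 5   # assumption: "number of cores" read as cores per GPU
power_factor = 6           # total power draw


def combined_speedup(*factors):
    """Multiply independent scaling factors into one overall factor."""
    return float(math.prod(factors))


total = combined_speedup(gpu_count_factor, per_core_fp64_factor, cores_per_gpu_factor)
efficiency_gain = total / power_factor  # performance-per-watt improvement

print(f"peak fp64 performance: {total:.0f}x")
print(f"performance per watt:  {efficiency_gain:.1f}x")
```

Under these assumptions the three factors account for the whole 100x step from ~20 petaflops to ~2 exaflops, while the machine only draws 6x the power.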

#1 When your fp64 throughput already equals your fp32 throughput, you can't improve the ratio any further (this is the case with the newest AMD Instinct GPUs)

#2 When your power draw is between 50 and 60 megawatts, you can't really scale it up anymore (unless you go to insane levels)

#3 When your frequency is around 2 GHz, you can't increase it much further without worsening power efficiency (that is why the Epyc CPUs in Frontier are clocked so low)

#4 Silicon scaling (Moore's Law) is becoming increasingly costly below 12nm, and cost per transistor is no longer falling significantly (that is why Aurora won't have even 8x Titan's storage or even 20x Titan's memory, even though the computer will cost around 5x as much)

#5 You start running into Amdahl's Law problems when trying to increase real-world performance and not only theoretical performance (for real-world performance, look up the HPCG benchmark)
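Point #5 can be illustrated with the textbook form of Amdahl's Law, speedup = 1 / ((1 - p) + p / n), where p is the fraction of the run time that parallelizes and n is the number of processors. The sketch below is generic: the 99% parallel fraction is an assumed value picked for illustration, not a measurement of any real HPC workload, and `amdahl_speedup` is a hypothetical helper name.

```python
def amdahl_speedup(parallel_fraction, n_processors):
    """Speedup predicted by Amdahl's Law for a fixed-size problem."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if n_processors < 1:
        raise ValueError("n_processors must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / n_processors)


p = 0.99  # assumed parallel fraction, for illustration only
for n in (10, 100, 1_000, 1_000_000):
    print(f"{n:>9} processors -> {amdahl_speedup(p, n):6.2f}x speedup")

# Upper bound as n grows without limit: 1 / (1 - p)
print(f"ceiling: {1.0 / (1.0 - p):.0f}x")
```

Even with 99% of the work parallelized, the speedup never exceeds 1 / (1 - p) = 100x, no matter how many cores are added. That is the gap between theoretical peak and real-world performance that this point is about.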

So I am very curious how they will scale supercomputers after Aurora. I am not surprised they can manage 2 exaflops. I will be surprised if they can manage 200 exaflops. That will be something. I can see possibly 10 exaflops (all in fp64) by increasing total core count by 5x, but what comes after that? Things become very hazy after 10 exaflops.

In my opinion (same since 2012), scaling beyond 10 exaflops requires completely new ideas and completely new ways of increasing performance, because old ideas just won't work anymore. They simply won't bring what's needed. For example true 3D architectures could be what ushers a new era in performance scaling. I don't mean just stacking up some extra cache, but true 3D processors, that are designed and produced in all three dimensions, possibly with

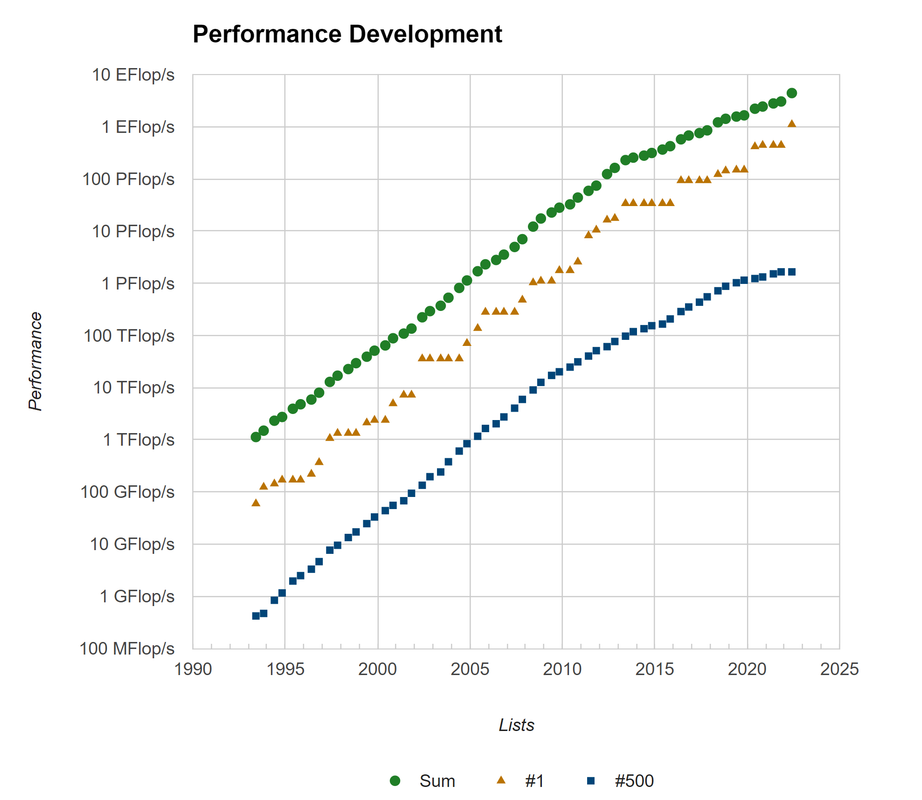

memristors instead of transistors. More than just improving CPU performance per clock by 2x and increasing core count by 5x. I will keep watching how supercomputers evolve, but what I see is that #500 improves more slowly than #1 and this means that the total impact will not be as great as some had hoped.

The global economy doubles its output every 15-20 years. Computer performance at a constant price nowadays doubles every 4 years on average. Livestock-as-food will globally stop being a thing by ~2050 (precision fermentation and more). Human stupidity, pride and depravity are the biggest problems of our world.