History of AI & Robotics

Posted: Wed May 19, 2021 8:30 pm

Relaunching from the legacy thread:

https://www.futuretimeline.net/forum/viewtopic.php?f=8&t=164

Artificial Intelligence (AI) is once again a promising technology. The last time this happened was in the 1980s, and before that, the late 1950s through the early 1960s. In between, commentators often described AI as having fallen into “Winter,” a period of decline, pessimism, and low funding. Understanding the field’s more than six decades of history is difficult because most of our narratives about it have been written by AI insiders and developers themselves, most often from a narrowly American perspective. In addition, the trials and errors of the early years are scarcely discussed in light of the current hype around AI, heightening the risk that past mistakes will be repeated. How can we make better sense of AI’s history and what might it tell us about the present moment?

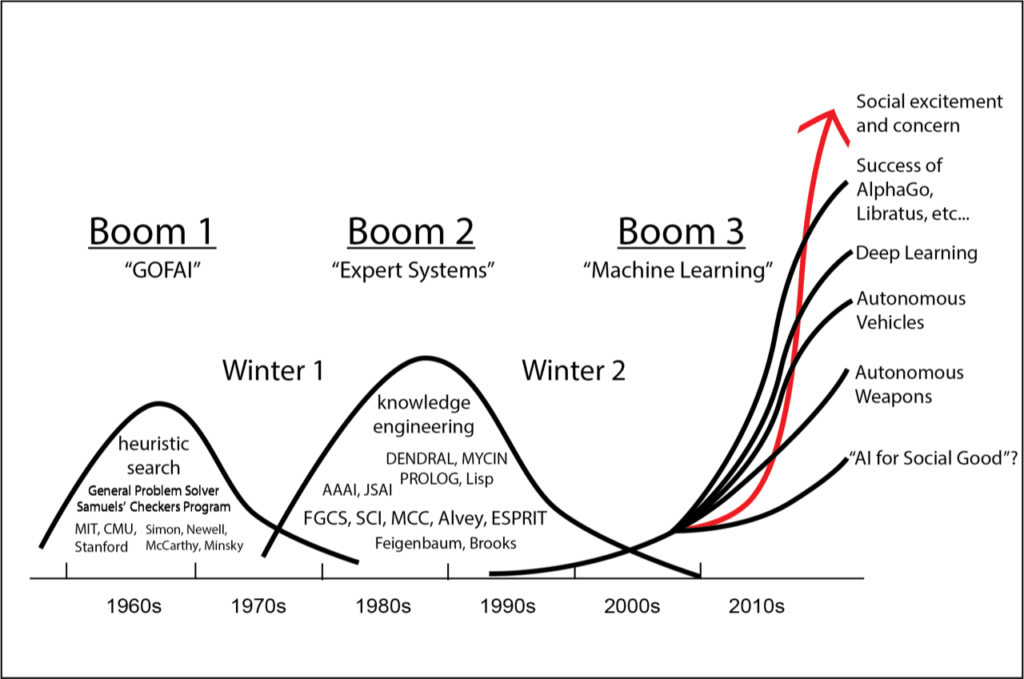

This essay adopts a periodization used in the Japanese AI community to look at the history of AI in the USA. One developer, Yutaka Matsuo, claims we are now in the third AI boom. I borrow this periodization because I think describing AI in terms of “booms” captures well the cyclical nature of AI history: the booms have always been followed by busts. In what follows I sketch the evolution of AI across the first two booms, covering a period of four decades from 1956 to 1996. In order to elucidate some of the dynamics of AI’s boom-and-bust cycle, I focus on the promise of AI. Specifically, we’ll be looking at the impact of statements about what AI one day would, or could, become.

Promises are what linguists call “illocutionary acts,” a kind of performance that commits the promise maker to a “future course of action.” A statement like, “We can make machines that play chess, I promise” has the potential to become true, if the promise is kept. But promises can also be broken. Nietzsche argued over a century ago that earning the right to make promises was a uniquely human problem. Building on that insight, the anthropologist Mike Fortun has explored the important role promises play in the construction of technoscience. AI is no exception. In Booms 1 and 2, the promises about AI were many, rarely kept, and still absolutely essential to its funding, development, and social impacts.

You know, I wonder if some people circa 1974-1979 or so considered artificial intelligence to be a pseudoscience. If that was the case, then oof, the situation would've been even worse than I thought.

The Lighthill report is the name commonly used for the paper "Artificial Intelligence: A General Survey" by James Lighthill, published in Artificial Intelligence: a paper symposium in 1973.

Published in 1973, it was compiled by Lighthill for the British Science Research Council as an evaluation of the academic research in the field of artificial intelligence. The report gave a very pessimistic prognosis for many core aspects of research in this field, stating that "In no part of the field have the discoveries made so far produced the major impact that was then promised".

It "formed the basis for the decision by the British government to end support for AI research in all but three universities": Edinburgh, Sussex and Essex. While the report was supportive of research into the simulation of neurophysiological and psychological processes, it was "highly critical of basic research in foundational areas such as robotics and language processing". The report stated that AI researchers had failed to address the issue of combinatorial explosion when solving problems within real-world domains. That is, it argued that AI techniques might work within small problem domains but would not scale up well to more realistic problems. The report represents the pessimistic view of AI that set in after the early excitement in the field.
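To put rough numbers on the combinatorial explosion point, here is a minimal sketch of how quickly an exhaustive search grows. The branching factor of 10 and the depths are illustrative assumptions of mine, not figures from the Lighthill report, and `count_action_sequences` is a hypothetical helper name.

```python
def count_action_sequences(branching_factor: int, depth: int) -> int:
    """Count the distinct action sequences of length `depth` when every
    step offers `branching_factor` choices (a full search tree's leaves)."""
    return branching_factor ** depth


# Assumed toy setting: 10 possible actions at every step.
for depth in (2, 5, 10, 20):
    print(f"depth {depth:>2}: {count_action_sequences(10, depth):,} sequences")
```

A planner that copes with depth 2 by brute force has nothing like the resources needed at depth 20, which is the scaling problem in miniature.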

The Science Research Council's decision to invite the report was partly a reaction to high levels of discord within the University of Edinburgh's Department of Artificial Intelligence, one of the earliest and biggest centres for AI research in the UK.

From Leonardo Da Vinci’s android to a French-made artificial duck, learn more about seven early mechanical wonders...

Computer scientist, machine learning researcher here.

I think deep learning is in a position now to make leaps and bounds forward at a remarkable pace. We know that the human brain features many examples of deep architectures for learning (the visual cortex being a striking example) and that it works remarkably well. In the past five years some very smart people have finally figured out how to train very deep, very complicated learning systems (mostly variations on neural networks).

While I'm skeptical of Kurzweil and his proclamations of the imminence of the singularity, I do think that it's not long until we have human-level computer vision, for example, with systems that are learned almost exclusively from unlabeled data.

PhD student, almost finished my first year.

General Field: Computer Science

Specifics: Machine Learning/Vision, Neural Networks/"Deep Architectures", Scientific Computing

Former work in Machine Learning applied to Computational Biology (during my MSc; specifically, gene expression cancer diagnostics, in silico gene function prediction and high-throughput microscopy analysis).

I work in machine learning, specifically the neurally inspired flavour which is undergoing something of a renaissance under the moniker of "deep learning". I'm interested in learning systems that automatically discover the "features" in the data they're provided with, rather than the hand-engineered features that account for most of machine learning's success stories. Better yet is when such features can be automatically learned at multiple levels of abstraction in such a manner that they disentangle the natural factors of variation in the data (in a way that Principal Components Analysis or Independent Components Analysis might do in a very simplistic, toy setting). I'm currently applying these methods to data compression, but am also interested in models of the visual system and other higher cognitive processes.

I'm also heavily involved in the numerical/scientific Python community, helping develop the hugely successful open source scientific computing tool stack built on the Python programming language.

Also fairly knowledgeable about a wide range of mathematics/statistics stuff.

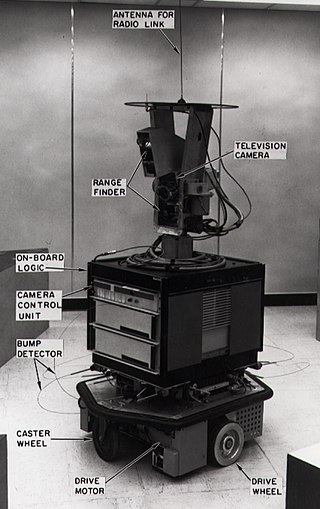

Shakey the Robot was the first general-purpose mobile robot to be able to reason about its own actions. While other robots would have to be instructed on each individual step of completing a larger task, Shakey could analyze commands and break them down into basic chunks by itself.

Due to its nature, the project combined research in robotics, computer vision, and natural language processing. Because of this, it was the first project that melded logical reasoning and physical action. Shakey was developed at the Artificial Intelligence Center of Stanford Research Institute (now called SRI International).

Some of the most notable results of the project include the A* search algorithm, the Hough transform, and the visibility graph method.
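For anyone who hasn't run into A* before, here is a minimal sketch of the idea on a small grid. This is a modern textbook-style version, not SRI's original code: the grid encoding (`#` for a blocked cell), the 4-connected unit-cost moves, the Manhattan-distance heuristic, and the names `a_star` and `GRID` are all assumptions made for illustration.

```python
import heapq


def a_star(grid, start, goal):
    """Return a shortest path from start to goal as a list of (row, col)
    cells on a 4-connected grid, or None if the goal is unreachable."""

    def heuristic(cell):
        # Manhattan distance never overestimates on a unit-cost grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    rows, cols = len(grid), len(grid[0])
    open_heap = [(heuristic(start), 0, start)]  # (f = g + h, g, cell)
    best_cost = {start: 0}
    came_from = {}

    while open_heap:
        _, cost, current = heapq.heappop(open_heap)
        if current == goal:
            # Walk the parent links back to the start.
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]
        if cost > best_cost[current]:
            continue  # stale heap entry; a cheaper route was already found
        r, c = current
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != "#":
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = current
                    heapq.heappush(
                        open_heap, (new_cost + heuristic(nxt), new_cost, nxt)
                    )
    return None


# Hypothetical toy map: a wall in column 2 with a gap in the bottom row.
GRID = ["..#.",
        "..#.",
        "...."]
print(a_star(GRID, (0, 0), (0, 3)))
```

The heuristic is what separates this from plain uniform-cost search: cells that look closer to the goal get expanded first, while the result is still a shortest path as long as the heuristic never overestimates.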

After SRI published a 24-minute video in 1969 entitled "SHAKEY: Experimentation in Robot Learning and Planning", the project received significant media attention. Coverage included an April 10, 1969 article in the New York Times; in 1970, Life referred to Shakey as the "first electronic person"; and in November 1970, National Geographic Magazine covered Shakey and the future of computers. The awards of the Association for the Advancement of Artificial Intelligence's AI Video Competition are named "Shakeys" because of the significant impact of the 1969 video.

So not very impressive in retrospect, especially considering the computer apparently only won a single game out of three. On a 9x9 board.