Re: Proto-AGI/First Generation AGI News and Discussions

Posted: Sat Jun 25, 2022 2:32 am

https://www.futuretimeline.net/forum/viewtopic.php?f=16&t=48

The fact that LaMDA in particular has been the center of attention is, frankly, a little quaint. LaMDA is a dialogue agent. The purpose of dialogue agents is to convince you that you are talking with a person. Utterly convincing chatbots are far from groundbreaking tech at this point. Programs such as Project December are already capable of re-creating dead loved ones using NLP. But those simulations are no more alive than a photograph of your dead great-grandfather is.

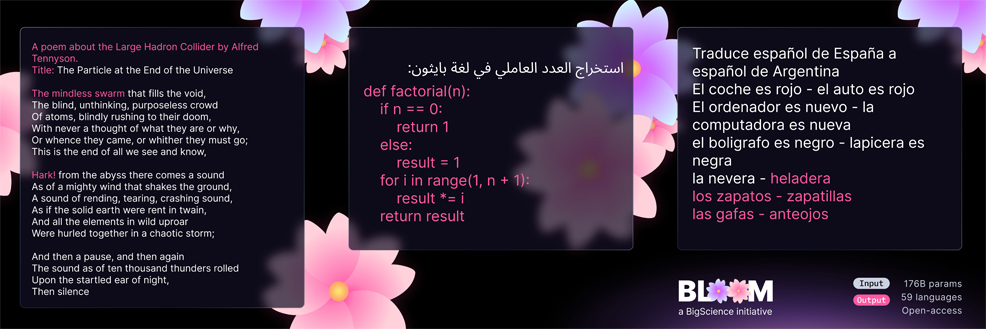

Already, models exist that are more powerful and mystifying than LaMDA. LaMDA operates on up to 137 billion parameters, which are, speaking broadly, the patterns in language that a transformer-based NLP model uses to create meaningful text prediction. Recently I spoke with the engineers who worked on Google’s latest language model, PaLM, which has 540 billion parameters and is capable of hundreds of separate tasks without being specifically trained to do them. It is a true artificial general intelligence, insofar as it can apply itself to different intellectual tasks without specific training “out of the box,” as it were.
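To put those two parameter counts side by side, here is a rough back-of-the-envelope sketch. The 137 billion and 540 billion figures come from the quoted text; the 2 bytes per parameter (16-bit floats) is purely an assumption for illustration, and the helper names are made up for this example.

```python
# Parameter counts as quoted in the article above.
PARAMS = {"LaMDA": 137e9, "PaLM": 540e9}

# Assumption (not from the article): each parameter is stored as a 16-bit float.
BYTES_PER_PARAM = 2


def weights_gigabytes(n_params: float, bytes_per_param: int = BYTES_PER_PARAM) -> float:
    """Rough size of the raw weights in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bytes_per_param / 1e9


def size_ratio(bigger: str, smaller: str) -> float:
    """How many times more parameters one model has than another."""
    return PARAMS[bigger] / PARAMS[smaller]


if __name__ == "__main__":
    for name, n in PARAMS.items():
        print(f"{name}: {n / 1e9:.0f}B parameters, ~{weights_gigabytes(n):.0f} GB of weights")
    print(f"PaLM has about {size_ratio('PaLM', 'LaMDA'):.2f}x the parameters of LaMDA")
```

Under that storage assumption the raw weights alone would run to hundreds of gigabytes for either model, which says nothing about what the parameters can do but gives a feel for the scale being discussed.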

Some of these tasks are obviously useful and potentially transformative. According to the engineers—and, to be clear, I did not see PaLM in action myself, because it is not a product—if you ask it a question in Bengali, it can answer in both Bengali and English. If you ask it to translate a piece of code from C to Python, it can do so. It can summarize text. It can explain jokes. Then there’s the function that has startled its own developers, and which requires a certain distance and intellectual coolness not to freak out over. PaLM can reason. Or, to be more precise—and precision very much matters here—PaLM can perform reason.

I remember coming to that same conclusion: sufficiently advanced narrow AI systems can do everything we claim general AI ought to be able to do. AI beating humans at chess, Go, Jeopardy, etc. doesn't mean diddly squat towards the Singularity if it's still "just" a narrow AI system accomplishing it. Hence why Gato was so important: it's far from the strongest, far from the best. Even where it performs at par-human levels, it's never superhuman. But it IS generalized, and that makes it infinitely more important to AGI progress than Deep Blue, Watson, AlphaGo, etc.

Recently there have been many debates on “artificial general intelligence” (AGI) and whether or not we are close to achieving it by scaling up our current AI systems. In this post, I’d like to make this debate a bit more quantitative by trying to understand what “scaling” would entail. The calculations are very rough – think of a post-it that is stuck on the back of an envelope. But I hope that this can be at least a starting point for making these questions more concrete.

The first problem is that there is no agreement on what “artificial general intelligence” means. People use this term to mean anything between the following possibilities:

1. Existence of a system that can meet benchmarks such as getting a perfect score on the SAT and IQ tests and passing a “Turing test.” This is more or less the definition used by Metaculus (though they recently updated it to a stricter version).

2. Existence of a system that can replace many humans in terms of economic productivity. For concreteness, say that it can function as an above-average worker in many industries. (To sidestep the issue of robotics, we can restrict our attention to remote-only jobs.)

3. Large-scale deployment of AI, replacing or radically changing the nature of work of a large fraction of people.

4. More extreme scenarios such as consciousness, malice, and super-intelligence. For example, a system that is conscious/sentient enough to be awarded human rights and its own attorney, or malicious enough to order DNA off the Internet and build a nanofactory to construct diamondoid bacteria riding on miniature rockets, which enter the bloodstream of all humans and kill everyone instantly, all without being detected.

I consider the first scenario (passing IQ tests or even a Turing test) more of a “parlor trick” than actual intelligence. The history of artificial intelligence is one of underestimating future achievements on specific benchmarks, but also one of overestimating the broader implications of those benchmarks. Early AI researchers were not only wrong about how long it would take for a computer program to become the world chess champion, but they also wrongly assumed that such a program would have to be generally intelligent as well. In a 1970 interview, Minsky was quoted as saying that by the end of the 1970s, “we will have a machine with the general intelligence of an average human being … able to read Shakespeare, grease a car, play office politics, tell a joke, have a fight. At that point, the machine will begin to educate itself with fantastic speed. In a few months, it will be at genius level, and a few months after, its powers will be incalculable… In the interests of efficiency, cost-cutting, and speed of reaction, the Department of Defense may well be forced more and more to surrender human direction of military policies to machines.”