21st November 2014 AI software can identify objects in photos and videos at near-human levels A new AI software program developed by researchers at Google and Stanford University can recognise objects in photos and videos at near-human levels of understanding.

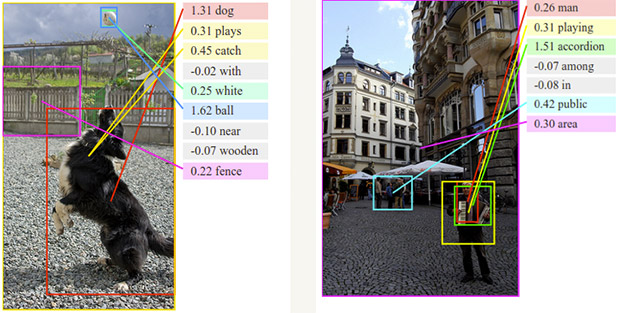

It was only recently that computer systems became smart enough to identify unknown objects in photographs. Even then, it has generally been limited to individual objects. Now, two separate teams of researchers at Google and Stanford University have created software able to describe entire scenes. This could lead to much better and more intelligent algorithms in the future. Stanford's work, entitled "Deep Visual-Semantic Alignments for Generating Image Descriptions", explains how specific details found in photographs and videos can be translated into written text. Google's version of the technology, in a study titled "Show and Tell: A Neural Image Caption Generator", produced similar results. While each team used a slightly different approach, they both combined deep convolutional neural networks with recurrent neural networks that excel at text analysis and natural language processing. The programs were able to "learn" from each new interaction, with algorithms enabling the system to improve its accuracy by scanning scene after scene, looking for patterns, and then using the accumulation of previously described scenes to extrapolate what is being depicted in the next unknown image.

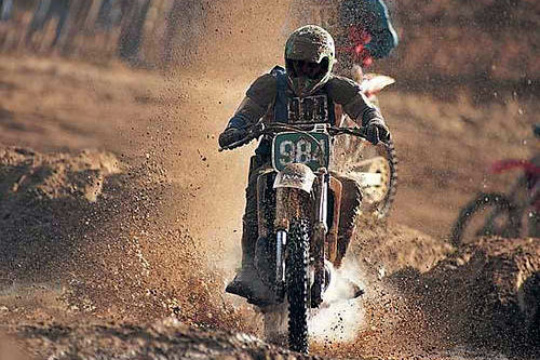

"The system can analyse an unknown image and explain it in words and phrases that make sense," says Fei-Fei Li, a professor of computer science and director of the Stanford Artificial Intelligence Lab. "This is an important milestone. It's the first time we've had a computer vision system that could tell a basic story about an unknown image by identifying discrete objects and also putting them into some context." These latest algorithms are being trained on a visual dictionary – the ImageNet project – with a database of more than 14 million objects. Each object is described by a mathematical term, or vector, that enables the machine to recognise the shape the next time it is encountered. Those mathematical definitions are linked to the words humans would use to describe the objects. “I was amazed that even with the small amount of training data that we were able to do so well,” said Oriol Vinyals, a Google computer scientist who worked with members of the Google Brain project. “The field is just starting, and we will see a lot of increases.” In the near term, computer vision systems that can discern the story in a picture will enable people to search photo or video archives and find highly specific images. Eventually, these advances will lead to robotic systems able to navigate unknown situations. Driverless cars would also be made safer. However, it also raises the prospect of even greater levels of government surveillance.

Comments »

|