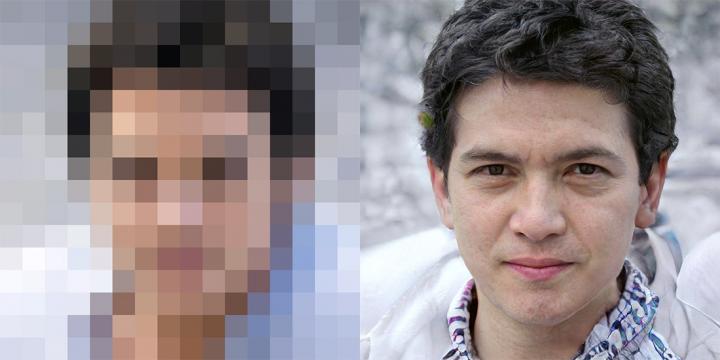

14th June 2020

AI makes blurry faces look 64 times sharper

Researchers at Duke University, North Carolina, have developed a new AI tool that can turn blurry, unrecognisable images of people's faces into eerily convincing computer-generated portraits, in finer detail than ever before.

Previous methods have been capable of scaling images up to eight times their original resolution. The Duke team's algorithm, however, creates realistic-looking faces with up to 64 times the resolution – "imagining" missing features such as fine lines, eyelashes, and stubble – from just a handful of pixels. "Never have super-resolution images been created at this resolution before with this much detail," said Duke computer scientist Cynthia Rudin, who led the team.

The system cannot be used to identify people, the researchers say. For example, it won't turn an out-of-focus, unrecognisable photo from a security camera into a crystal-clear image of a real person. Rather, it generates new faces that do not exist but look plausibly real.

While the researchers focused on faces as a proof of concept, the same technique could in theory take low-resolution shots of almost anything and create sharp, realistic-looking pictures. This would have many potential applications, ranging from medicine and microscopy to astronomy and satellite imagery, according to study co-author Sachit Menon, who has just graduated from Duke with a double major in mathematics and computer science.

The researchers are presenting their method, called Photo Upsampling via Latent Space Exploration (PULSE), at the 2020 Conference on Computer Vision and Pattern Recognition (CVPR), held virtually from 14th to 19th June.

Traditional approaches take a low-resolution image and "guess" what extra pixels are needed by trying to get them to match, on average, the corresponding pixels in high-resolution images the computer has seen before. Because of this averaging, textured areas in hair and skin that might not line up perfectly from one pixel to the next end up looking fuzzy and indistinct.

The Duke team came up with a different approach. Instead of taking a low-resolution image and slowly adding new detail, the system scours AI-generated examples of high-resolution faces, searching for ones that look as much as possible like the input image when shrunk down to the same size.

The team used a machine learning tool called a generative adversarial network (GAN), in which two neural networks, drawing on the same data set of photos, are pitted against each other. One network generates human faces that mimic the ones it was trained on, while the other takes this output and decides whether it is convincing enough to be mistaken for the real thing. The first network gets better and better with experience, until the second network can no longer tell the difference.

PULSE can create realistic-looking images from noisy, poor-quality input that other methods cannot handle, explained Rudin. From a single blurred image of a face it can produce any number of uncannily lifelike possibilities, each of which looks subtly like a different person. Even given pixelated photos, where the eyes and mouth are barely recognisable, "our algorithm still manages to do something with it, which is something that traditional approaches can't do," said co-author Alex Damian.

The system can convert a 16 x 16-pixel image into a 1024 x 1024-pixel image in a few seconds, adding more than a million pixels and reaching roughly HD resolution. Details such as pores, wrinkles, and wisps of hair that are imperceptible in the low-res thumbnail become crisp and clear in the newly generated version.

The researchers asked 40 people to rate 1,440 images generated via PULSE and five other scaling methods on a scale of one to five. PULSE did the best, scoring almost as well as high-quality photos of actual people.
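The contrast drawn above, between averaging many plausible high-resolution answers and searching generated images for one that matches the input once shrunk down, can be illustrated with a toy sketch. It is not PULSE's code. The 4 x 4 grayscale "images", the 2 x 2 average pooling used as the shrinking step, and the hypothetical `generate()` function standing in for a trained GAN generator are all assumptions made for illustration. PULSE explores the latent space of a trained face GAN by optimisation, whereas this sketch simply tries every code in a short hypothetical list.

```python
"""Toy sketch: upscale by searching generated images for one that matches the
input once shrunk, versus averaging all consistent candidates. Not PULSE's
code; `generate` is a hypothetical stand-in for a trained GAN generator."""

Image = list[list[float]]  # rows of grayscale pixel values in [0, 1]
Latent = tuple[str, int]   # hypothetical latent code: (pattern, phase)

# The tiny "latent space" searched exhaustively here (an assumption; PULSE
# optimises a continuous latent vector of a real face GAN instead).
LATENTS: list[Latent] = [(p, ph) for p in ("checker", "stripes", "flat") for ph in (0, 1)]


def generate(latent: Latent, size: int = 4) -> Image:
    """Hypothetical generator: map a latent code to a sharp black/white texture."""
    pattern, phase = latent

    def pixel(r: int, c: int) -> float:
        if pattern == "checker":
            return float((r + c + phase) % 2)
        if pattern == "stripes":
            return float((c + phase) % 2)
        return float(phase)  # "flat": all black (0) or all white (1)

    return [[pixel(r, c) for c in range(size)] for r in range(size)]


def downscale(img: Image, factor: int = 2) -> Image:
    """Shrink an image by averaging each non-overlapping factor x factor block."""
    size = len(img) // factor
    return [
        [
            sum(img[r * factor + i][c * factor + j]
                for i in range(factor) for j in range(factor)) / factor ** 2
            for c in range(size)
        ]
        for r in range(size)
    ]


def squared_error(a: Image, b: Image) -> float:
    """Sum of squared pixel differences between two equally sized images."""
    return sum((x - y) ** 2 for ra, rb in zip(a, b) for x, y in zip(ra, rb))


def downscaling_loss(latent: Latent, low_res: Image) -> float:
    """How far the generated image is from the input once shrunk to its size."""
    return squared_error(downscale(generate(latent)), low_res)


def best_match(low_res: Image) -> Latent:
    """Search-based upscaling: pick the first latent whose shrunk output matches best."""
    return min(LATENTS, key=lambda z: downscaling_loss(z, low_res))


def average_of_consistent(low_res: Image, tol: float = 1e-9) -> Image:
    """Averaging-style upscaling: pixel-wise mean of every consistent candidate."""
    matches = [generate(z) for z in LATENTS if downscaling_loss(z, low_res) <= tol]
    n, size = len(matches), len(matches[0])
    return [[sum(m[r][c] for m in matches) / n for c in range(size)] for r in range(size)]


def contrast(img: Image) -> float:
    """Difference between brightest and darkest pixel (0.0 means flat grey)."""
    pixels = [p for row in img for p in row]
    return max(pixels) - min(pixels)


if __name__ == "__main__":
    low_res = [[0.5, 0.5], [0.5, 0.5]]  # a 2 x 2 "blurry" input
    sharp = generate(best_match(low_res))
    blurry = average_of_consistent(low_res)
    print(best_match(low_res))                # ('checker', 0)
    print(contrast(sharp), contrast(blurry))  # 1.0 0.0
```

Every checkerboard and stripe texture in the sketch shrinks to the same flat grey input, so averaging them cancels their detail into uniform grey, while picking any single consistent candidate keeps full contrast. In miniature, that is why averaging-based upscalers blur hair and skin, and why a search-based method can return many different sharp answers for one blurry input.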
