2025-2050

Technological unemployment is rising rapidly

The second quarter of the 21st century is marked by a rapid rise in technological unemployment around much of the world. This results in considerable economic, political, social, and cultural upheaval. For most of the 200 years since the Industrial Revolution, new technological advances had tended to create more jobs than they destroyed. By the early 21st century, however, a fundamental change had begun to occur.

Median wages, already stagnating for decades, remained largely flat – especially in developed nations. Previously, globalisation and the outsourcing of jobs to markets with lower wages had been a primary driver of unemployment in high-income countries. But from the late 2020s onward, a combination of automation, artificial intelligence, and robotics is becoming a far more significant contributor to job displacement.

Whereas automation initially threatened mostly blue-collar workers in manufacturing and routine physical tasks, rapid advances in so-called generative AI and large language models (LLMs) begin to profoundly disrupt white-collar professions during this period. Fields like copywriting, journalism, software development, graphic and web design, accounting, customer support, and even certain legal services are impacted by significant job losses – particularly in entry-level roles.

Previously, some mainstream institutions had forecast a net positive impact of AI and automation on employment. However, these projections increasingly appear out of step with real-world trends. Ongoing, exponential improvements in LLMs, generative AI, and robotics lead many experts to predict far higher levels of disruption than previously assumed. Sophisticated AI models, now entering widespread adoption, can automate tasks once believed to require human creativity and judgement.** Some of these systems are becoming increasingly "agentic" – able to operate autonomously over long time horizons, pursue goals, and take actions without continuous human oversight. OpenAI's Deep Research tool, for example, allows an AI to independently explore topics, gather evidence, and produce extended written outputs with minimal human prompting.*

An even greater inflection point appears imminent, with artificial general intelligence (AGI) moving closer to reality.* Unlike earlier tools limited to narrow uses, AGI has the potential to accomplish a broad spectrum of tasks at or beyond human level – not just augmenting labour, but replacing it across many domains. From strategic planning and scientific research to real-time decision-making and complex problem-solving, AGI threatens to make large segments of the knowledge economy redundant in a short timeframe.

Despite earlier optimistic predictions made in the 2010s, self-driving vehicles have yet to see mass rollout in transportation by 2025. Autonomous taxis, trucks, and delivery vehicles do exist, particularly in controlled environments or specific urban routes, but more widespread adoption remains limited due to persistent safety concerns, unpredictable edge-case scenarios, and regulatory complexities. Between 2030 and 2040, however, substantial improvements in AI perception, real-time decision-making, and infrastructure standardisation enable broader deployment of autonomous transport.** Smaller road vehicles such as taxis, delivery vans, and shuttle buses* experience rapid automation, impacting employment in these sectors. Early trials by companies like Waymo in San Francisco and Phoenix pave the way for greater adoption by numerous firms and cities worldwide. Larger autonomous vehicles, meanwhile – such as trucks, trains, ships, and even aircraft – also begin shifting to greater autonomy during this period, though initially at a slower pace.

Manufacturing, already heavily automated since the late 20th century, sees continued and accelerated replacement of workers by ever more versatile and adaptable robots, boosted by breakthroughs in machine learning, dexterity, and computer vision. By 2035, advanced humanoid and industrial robots can perform a wide range of complex assembly tasks, displacing large numbers of traditional manufacturing roles in many sectors. Other areas are seeing widespread adoption of robots during this time, such as catering, elderly care, hospitals, security patrols, and domestic environments. As the 2030s unfold, companies find robots increasingly cost-effective, reliable, and scalable compared to human workers.

By 2040, the cumulative impacts of automation, robotics, and the widespread adoption of AGI have caused a marked decline in global labour force participation. From over 70% in 1990,* the percentage of working-age (15–64) people in employment had already fallen to 67% by 2025. This downward trend is now accelerating significantly, with potential to reach as low as 41% by 2050. Industries such as transport, manufacturing, administrative services, retail, catering and food services, finance, and healthcare face large-scale job losses.
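As a rough illustration of how sharply the projected decline outpaces the historical one, the figures quoted above can be turned into average yearly changes. This is only a back-of-envelope sketch: it treats "over 70%" as exactly 70% and assumes straight-line change between the quoted years. The names `POINTS` and `avg_change_per_year` are invented for this example.

```python
# Employment figures quoted in the text (percent of people aged 15-64 in work).
# 70.0 stands in for "over 70%", so the 1990-2025 rate is an approximation.
POINTS = {1990: 70.0, 2025: 67.0, 2050: 41.0}


def avg_change_per_year(start_year: int, end_year: int) -> float:
    """Average change, in percentage points per year, between two quoted years."""
    return (POINTS[end_year] - POINTS[start_year]) / (end_year - start_year)


past = avg_change_per_year(1990, 2025)       # historical trend
projected = avg_change_per_year(2025, 2050)  # low-end scenario from the text

print(f"1990-2025: {past:+.2f} points per year")
print(f"2025-2050: {projected:+.2f} points per year")
print(f"Projected decline is roughly {projected / past:.0f} times faster")
```

Under these assumptions, the fall to 41% by 2050 implies losing about one percentage point every year, against less than a tenth of a point per year over the preceding 35 years.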

Initially, the rising unemployment triggers widespread social instability, protests, and political crises around the world. Traditional labour unions, now with diminished leverage over employers, are weakened, and governments struggle to respond effectively. The increasingly rapid pace of "job churn" – constant shifts in employment and skill demands – adds to the upheaval, with many workers unable to retrain fast enough or transition to new roles. Wealth inequality worsens, fuelling populist and anti-technology sentiments.

To mitigate social unrest and economic stagnation, governments increasingly embrace solutions such as Universal Basic Income (UBI). This concept, which had previously been controversial, gains broader support across the political spectrum as few viable alternatives emerge. Initially trialled on limited scales during the 2010s and 2020s, UBI schemes become vital for maintaining economic stability and consumer spending as job losses mount from 2030 onward. While UBI becomes widespread, some countries alternatively or additionally expand public services or adopt targeted approaches such as guaranteed minimum income, negative income tax, universal basic services, or job guarantee schemes.

Countries implement UBI differently based on cultural and economic factors. In many European nations, comprehensive welfare states gradually transform their existing entitlement programmes into streamlined UBI systems. The United States is slower to adapt, facing initial resistance due to extreme ideological divisions. However, a bipartisan consensus ultimately emerges – driven by urgent necessity, mass unemployment, and intensifying unrest. Its eventual version of UBI is shaped more by market-oriented values, heavily influenced by Silicon Valley lobbying, and funded in part by taxes on Big Tech. Payments are often delivered through digital platforms, with eligibility sometimes tied to participation in online upskilling programmes.

These income systems help not only to stabilise societies economically, but also to provide individuals with resources for retraining, education, entrepreneurship, and creative endeavours. While implementation varies by country, UBI becomes a crucial pillar of social policy in the face of mounting technological disruption.

Despite the short-term upheaval and challenges posed by mass unemployment, the widespread adoption of UBI – combined with continued progress in automation, artificial intelligence, and robotics – lays the groundwork for a future in which individuals can pursue meaningful work, lifelong learning, or leisure on their own terms. By 2050, humanity is shifting towards a more creative and resilient economic model, supported by automation but increasingly focused on human wellbeing. Technological unemployment, initially seen as a crisis, gradually becomes recognised as a transitional phase in the evolution toward greater individual freedom and social cohesion.

2025-2035

All television is becoming Internet-based

During this period, cable TV and other traditional broadcast models are increasingly marginalised in favour of Internet-based streaming. The inflexibility of scheduled programming had made linear television less attractive, with viewers shifting instead towards on-demand services offering greater choice, convenience, and value for money. By the late 2010s, more people were already streaming video online each day than watching scheduled linear TV.* This trend continued into the following two decades,* resulting in a substantial loss of subscribers for traditional media companies,* which were forced to adapt their business models or risk long-term decline.

In Britain, the traditional television licence fee (£157 annually, as of 2020) had been called into question, with many arguing that it should no longer be mandatory for all television owners. A Royal Charter guaranteed licence fee funding until 2026, while the Conservative government explored alternative methods of financing the BBC in the future.* Proposals included increased reliance on advertising revenue, the introduction of a new broadcasting levy, and a shift towards subscription-based models. However, successive Royal Charters continued to extend the licence fee's use until at least the late 2020s.

The visual quality of television sets, tablets, and other viewing devices is markedly improving compared to previous generations, with 8K resolutions becoming increasingly affordable and widespread over time. Connection speeds are rising in parallel, able to accommodate the continued growth in online video. By 2035, the global average broadband speed exceeds 1 Gbit/s,* with early adopters and high-end users accessing substantially faster connections.

Access and coverage also continue to improve through expanded rural and remote networks, wider availability of public Wi-Fi, and the rapid growth of satellite constellations.* With a growing majority of the world now coming online, the resulting flow of information contributes to greater awareness of political issues, corruption, and injustice. Citizens in even the poorest regions can document and share their experiences using mobile devices, capturing and disseminating video evidence of events such as war crimes and human rights abuses.*

2025-2030

Many cities are banning fossil fuel-powered vehicles

During this period,* many cities and regions around the world enforce outright bans on the use of traditional petrol and diesel-powered vehicles. This is primarily to meet climate targets under international agreements such as the Kyoto Protocol and the Paris Agreement, but is also for reasons of energy independence and improved air quality.

Among the first places to announce bans were Athens, Madrid, Paris and Mexico City. In December 2016, the mayors of all four cities pledged to take diesel cars and vans off their roads by 2025. Over the next few years, many more plans were announced for partial (diesel only) or complete bans (both gasoline and diesel) in more than 20 countries. The vast majority would cover the 2025-2030 timeframe, with some being implemented sooner (e.g. 2020 for Oxford, UK) and a few others later (e.g. 2040 for China, France and the UK). For these 'outliers' in 2040, it was subsequently suggested that the timelines were not ambitious enough and should be brought forward.

By the early 2020s, a flood of additional countries had joined this planned phase out. With zero-emission vehicles now cheaper than ever, their numbers were growing exponentially, regardless of any bans or regulations. Batteries had been the main reason why electric cars were more expensive than their internal combustion engine (ICE) counterparts, but these prices have declined at such a rate that the overall price balance has flipped by the late 2020s. The relative cost difference continues to widen each year, making them the preferred option from now on. Many cities are beginning to see a noticeable improvement in air quality.

Kivalina faces relocation as erosion intensifies

Kivalina, a small Alaskan village on a 12 km (7.5 mi) long barrier island, continues to experience rapid erosion and rising sea levels. Home to approximately 400 Iñupiat residents, the community relies on hunting and fishing traditions passed down for generations. However, a warming Arctic now threatens their way of life.

Temperatures in Alaska are increasing at twice the rate of the rest of the United States, causing Arctic sea ice to retreat dramatically. This loss of natural protection leaves Kivalina exposed to powerful storms and relentless waves. The US Army Corps of Engineers built a defensive wall to slow the damage, but it provides only a temporary reprieve. Coastal erosion is now encroaching on the village faster than expected, with some structures at risk of collapse into the sea.

Efforts to relocate the community are gaining urgency during this period.* After years of debate and delays, plans for a new settlement on the mainland are showing renewed momentum, but progress remains slow due to limited funding and logistical challenges. Meanwhile, the melting ice is opening up controversial opportunities for oil and gas extraction in the region, raising questions about the balance between economic development and environmental protection.*

Kivalina's plight highlights the broader struggles faced by Indigenous Arctic communities, who remain on the frontlines of climate change.

2025-2028

The United States is becoming more authoritarian

On 5th November 2024, Donald Trump defeated the Democratic candidate Kamala Harris in the US presidential election. Trump became the first Republican nominee since 2004 to win the popular vote, and the first president to be elected to a non-consecutive second term since Grover Cleveland won the 1892 election, 132 years earlier.

Trump's campaign incited outrage with its barrage of false statements and divisive rhetoric – including claims that the 2020 election had been stolen, engaging in anti-immigrant fearmongering, and promoting conspiracy theories. Trump's embrace of far-right extremism, as well as increasingly violent, dehumanising, and authoritarian language against his political opponents, drove historians and scholars to describe his words as fascist, unlike anything a political candidate had ever said in modern U.S. history, and a continued breaking of political norms.

With control over both the executive branch and Congress, Trump's administration quickly set its sights on implementing Project 2025 – a sweeping blueprint for consolidating power within the federal government. Published by The Heritage Foundation, America's most influential conservative think tank, and co-authored by 140 former Trump staffers, Project 2025 outlines a bold vision to reshape the country's governance. The plan grants the president unprecedented control over federal agencies, reduces regulatory oversight, and strips protections across various sectors. Through this initiative, the executive branch seeks to dismantle the so-called "deep state" and replace thousands of career civil servants with loyalists, prioritising those aligned with Trump's ideological vision. Critics warn that these changes could weaken democratic institutions and erode checks and balances, letting the president operate with fewer constraints and less accountability.

One of the Trump administration's first major actions is a sweeping programme of mass deportations targeting undocumented immigrants. With promises to remove up to 10 million undocumented residents from the United States, the administration moves swiftly to expand Immigration and Customs Enforcement (ICE) operations, increase detention centre capacity, and deploy resources to accelerate deportation proceedings. The goal, officials claim, is to secure the nation's borders, reduce crime, and prioritise jobs for American citizens.

In addition to the mass deportations, Project 2025 includes proposals to denaturalise certain immigrants – a process that revokes the U.S. citizenship of naturalised immigrants deemed "problematic" or "disloyal" – thus rendering them deportable. Although historically a rare practice and highly controversial, denaturalisation enters mainstream policy discussions during this time, with potential targets including individuals from certain countries, those with specific political affiliations, or those found to be overly critical of the administration.

However, the scale of this deportation effort is hampered by formidable challenges, with projected costs running into the hundreds of billions, alongside a number of legal, logistical, and operational issues.* Industries such as agriculture, construction, and hospitality – which rely heavily on immigrant labour – face the potential for major workforce shortages and supply chain disruptions, triggering a backlash from business leaders. Legislative progress also stalls, as even some conservative lawmakers express concerns over the programme's economic and social implications. Civil rights organisations file a series of legal challenges, complicating and delaying the rollout, with courts ruling in some cases that specific aspects of the programme violate the constitution.

Despite these hurdles, Project 2025's centralised structure allows the administration to push forward elements of the initiative by bypassing certain safeguards and circumventing state-level protections. Together with ongoing issues over the Mexico border wall, started during Trump's first presidency, these deportations become highly contentious and polarising. Increasingly frequent reports emerge of human rights abuses, particularly at detention centres, such as overcrowding, poor sanitation, and lack of care.

In addition to immigration reform, Project 2025 includes a series of measures aimed at expanding federal surveillance and tightening control over civil liberties. Under the guise of "national security," Trump's administration proposes enhanced monitoring tools for tracking suspected threats – targeting political opponents, activists, and minority communities. The programme extends to online spaces, where authorities implement intensified monitoring of social media platforms, forums, and independent news websites to detect and flag "subversive" content. These expanded surveillance capabilities raise concerns about the potential targeting of individuals who openly criticise the administration or express dissenting views.

New policies also seek to curtail press freedom with stricter regulations on media outlets deemed "unpatriotic" or "biased." As part of this effort, the Trump administration moves to defund public broadcasting entities like NPR and PBS. Journalists critical of the administration face heightened scrutiny, with some even threatened with legal action.

Meanwhile, Project 2025 empowers federal law enforcement with broader authority to suppress protests, even going as far as deploying the military to break up such gatherings. This marks a significant departure from American norms, which historically upheld free assembly and civil disobedience as protected rights, drawing comparisons to hardline regimes like China and Russia.

Adding to concerns over civil liberties, Project 2025 mandates that states provide abortion data, ending what had previously been a voluntary reporting system.* States must now report on the number of abortions performed, gestational age at the time of the procedure, reasons for each abortion, the pregnant person's state of residence, and method used. To enforce compliance, the administration threatens to halt federal funding for states that do not supply the required data. As surveillance of pregnancy outcomes intensifies, this data is weaponised against individuals in states hostile to abortion rights, criminalising those seeking or assisting with abortions. The prospect of a nationwide abortion ban moves closer to reality, with grave implications for women's health and well-being.

Project 2025 also envisions a rollback of protections for LGBT individuals, proposing measures that remove federal recognition of gender diversity and eliminate protections against discrimination in healthcare, housing, and employment. The plan aims to reinstate narrow definitions of gender and sex, erasing policies that provide access to gender-affirming healthcare and protect individuals from discrimination based on sexual orientation or gender identity. Critics argue that this marks a dangerous regression in civil rights, targeting people in ways that parallel the restrictive laws seen in authoritarian states that enforce social conformity. The impact of these policies threatens to marginalise LGBT individuals further, eroding gains made in recent decades and enforcing a rigid, state-sanctioned ideology around gender and sexuality.

Overall, the combination of intensified surveillance, restrictions on reproductive rights, rollback of LGBT protections, expanded police and military powers, and the deportation of political dissidents creates a troubling precedent for free speech, privacy, and civil liberties, marking a shift towards a more authoritarian state.

Project 2025 includes many other proposals – affecting education, employment, the environment, welfare programs, and much more* – signalling a decisive rightward turn in policymaking. As it begins to reshape American governance, concerns grow that the 2024 election may have been the last truly free and fair presidential contest in the United States. The consolidation of executive power lays a strong foundation to influence or restrict any future democratic processes. With echoes of tactics employed by autocratic leaders such as Vladimir Putin, observers warn that democratic safeguards Americans have long taken for granted may weaken further, creating a turbulent road to the 2028 election and beyond.

2025

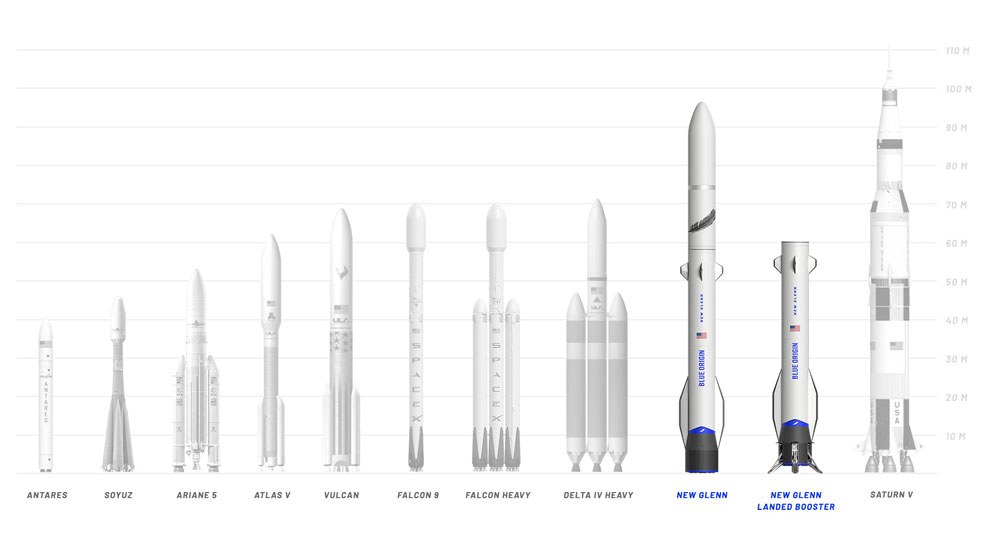

First flight of the New Glenn reusable rocket

New Glenn (named after the late U.S. astronaut, John Glenn) is a heavy-lift orbital launch vehicle developed by Blue Origin, the aerospace company founded by Amazon boss Jeff Bezos. The booster stage is designed to be reusable, cutting launch costs and making it a competitor to SpaceX.

Previously, Blue Origin had developed the New Shepard – a vertical-takeoff, vertical-landing (VTVL), crew-capable rocket. Prototype testing in 2006, followed by full-scale engine development in the early 2010s, led to a first flight in 2015. Reaching an altitude of 93 km (58 miles), this uncrewed demonstration was deemed partially successful, as the onboard capsule was recovered via parachute landing, while the booster stage crashed, and was not recovered. By 2019, a further 11 test flights had taken place, all successfully landing and recovering the booster stage.

The New Shepard, with a height of 18 m (59 ft) and only a tiny payload, fell into the sub-orbital class of rockets. By contrast, its successor would be more than five times as tall on the launch platform. New Glenn, standing 98 m (322 ft), dwarfed the earlier New Shepard and was designed to carry 45,000 kg (99,000 lb) to low-Earth orbit (LEO) and 13,600 kg (30,000 lb) to geosynchronous transfer orbit (GTO).
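The size comparison and unit conversions quoted above are easy to sanity-check. The short sketch below does so using standard conversion factors; the variable names are made up for illustration, and the figures are simply those given in the text.

```python
FT_PER_M = 3.28084    # feet per metre
LB_PER_KG = 2.20462   # pounds per kilogram

# Figures as quoted in the text.
new_shepard_height_m = 18
new_glenn_height_m = 98
payload_leo_kg = 45_000
payload_gto_kg = 13_600

height_ratio = new_glenn_height_m / new_shepard_height_m

print(f"New Glenn is {height_ratio:.1f} times as tall as New Shepard")
print(f"New Shepard height: {new_shepard_height_m * FT_PER_M:.0f} ft")
print(f"New Glenn height:   {new_glenn_height_m * FT_PER_M:.0f} ft")
print(f"Payload to LEO:     {payload_leo_kg * LB_PER_KG:,.0f} lb")
print(f"Payload to GTO:     {payload_gto_kg * LB_PER_KG:,.0f} lb")
```

The ratio comes out at a little under five and a half, consistent with "more than five times as tall", and the imperial figures in the text are the metric ones rounded to a sensible precision.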

Blue Origin began working on the New Glenn in 2012, and publicly revealed its design and specifications in 2016. The vehicle, described as a two-stage rocket with a diameter of 7 m (23 ft), would be powered by seven BE-4 engines (equivalent to 21 Boeing 747s). Bezos was by now reportedly selling $1 billion worth of Amazon.com stock annually – a figure that doubled by the end of the decade – in order to fund Blue Origin.

By 2019, Blue Origin had gained five customers for New Glenn flights, including a multi-launch contract with Telesat for its broadband constellation. All of these launches would feature a reusable first stage, meaning the booster would return to Earth and land vertically, just like the New Shepard sub-orbital launch vehicle that preceded it. New Glenn faced a number of delays, however, with its launch repeatedly postponed.

In early 2023, NASA selected Blue Origin as the contractor to launch the Escape and Plasma Acceleration and Dynamics Explorers (EscaPADE) – a pair of smallsats for studying the solar wind and magnetosphere around Mars – delivered as payloads on the New Glenn.

Following much speculation over the launch date, New Glenn's inaugural flight took place on 16th January 2025, lifting off from Cape Canaveral Space Force Station in Florida.* The rocket's seven BE-4 engines fired on schedule and the vehicle successfully reached orbit, delivering its payload into medium Earth orbit and achieving the mission's primary objective. However, the reusable first stage failed to land on the downrange platform. The first successful booster return and landing followed on New Glenn's second flight in November 2025, which also deployed NASA's twin EscaPADE spacecraft bound for Mars.

First light for the Vera C. Rubin Observatory

The Vera C. Rubin Observatory – formerly known as the Large Synoptic Survey Telescope – enters a pivotal stage in 2025. This huge, state-of-the-art project, funded by the US National Science Foundation and Department of Energy, achieves 'first light' – the moment when a telescope captures its first usable images – and begins full scientific operations. This milestone follows 24 years of development that began with a proposal in 2001, fabrication of the mirror starting in 2007, and site construction from 2015 onwards.

Situated on Cerro Pachón, a mountain in Chile, the facility houses an 8.4-metre optical telescope, and the most powerful digital camera ever built for astronomy, with an astonishing resolution of 3.2 gigapixels. The camera captures images using six filters that span the optical electromagnetic spectrum, from violet to the edge of infrared.

Credit: SLAC National Accelerator Laboratory

The observatory is designed to survey the entire visible sky every few nights, offering a dynamic and comprehensive view of the cosmos that promises transformative discoveries. Its main scientific goals include:

• Studying dark energy and dark matter by measuring weak gravitational lensing, baryon acoustic oscillations, and photometry of Type Ia supernovae, all as a function of redshift.

• Mapping small objects in the Solar System, particularly near-Earth asteroids and Kuiper Belt objects, increasing the number of catalogued objects by a factor of 10–100. It will also aid in the search for the hypothesised Planet Nine.

• Detecting transient astronomical events, including novae, supernovae, gamma-ray bursts, quasar variability, and gravitational lensing, while providing prompt event notifications to facilitate follow-up observations.

• Mapping the Milky Way, refining knowledge of its structure, star clusters, and interstellar dust.

With its fast-moving telescope and high sensitivity, Vera Rubin can alert operators to changes in the night sky within 60 seconds, helping researchers quickly plan and deploy missions to track rapidly moving objects of interest. For example, only two interstellar objects had been observed before 2025 – 'Oumuamua (2017) and 2I/Borisov (2019) – but as many as 70 can be tracked by Vera Rubin each year.*

By conducting a deep survey over the entire night sky, every few nights for ten years, the observatory obtains astronomical catalogues that are orders of magnitude larger than any previously compiled. Approximately 20 billion galaxies and 17 billion stars are identified, each with 200 attributes, a dramatic increase from the "only" six million galaxies and 1.7 billion stars known before 2025.
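To put "orders of magnitude" into numbers, the catalogue sizes quoted above can be compared directly. The sketch below takes the article's round figures at face value and assumes that every catalogued object carries the full 200 attributes; all names are illustrative.

```python
import math

# Round figures quoted in the text.
galaxies_new, stars_new = 20_000_000_000, 17_000_000_000
galaxies_old, stars_old = 6_000_000, 1_700_000_000
attributes_per_object = 200

galaxy_growth = galaxies_new / galaxies_old
star_growth = stars_new / stars_old
total_values = (galaxies_new + stars_new) * attributes_per_object

print(f"Galaxies: {galaxy_growth:,.0f}x more "
      f"(about {math.log10(galaxy_growth):.1f} orders of magnitude)")
print(f"Stars: {star_growth:,.0f}x more "
      f"(about {math.log10(star_growth):.1f} orders of magnitude)")
print(f"Attribute values in the catalogue: {total_values:.1e}")
```

On these figures, the galaxy catalogue grows by more than three orders of magnitude and the star catalogue by one, giving several trillion individual measurements to store and query.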

Data and images totalling more than 500 petabytes are generated by the Vera Rubin Observatory's huge camera, with subsequent analysis helping to address some of the most pressing questions about the structure and evolution of the universe and the objects within it.

Rubin Observatory/NSF/AURA/A. Pizarro D. (Creative Commons Attribution 4.0 International License / CC BY 4.0)

Sample return mission to Kamoʻoalewa

In 2025, China launches a sample return mission to the near-Earth asteroid 469219 Kamoʻoalewa.* This tiny, fast-rotating body is just 41 m (135 ft) in diameter and is the second-smallest, closest, and most stable known quasi-satellite of Earth. Its orbit, and the lunar-like silicates it contains, make it likely to be an impact fragment from the Moon. The mission provides confirmation of this, along with further science data when it returns the sample.

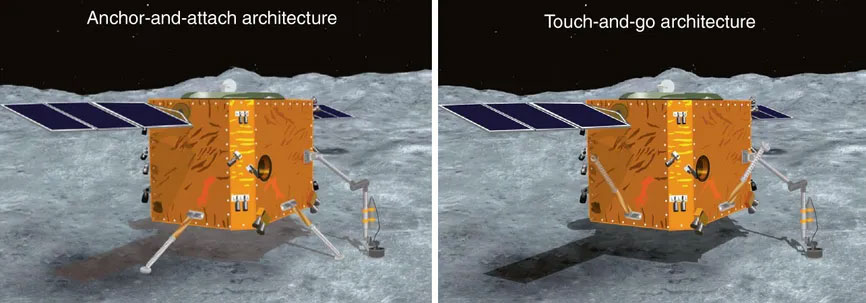

The technical challenges include entering and keeping orbit around a small body with very weak gravity. The spacecraft requires long-life propulsion engines and a high-precision navigation, guidance, and control system. The return capsule must also withstand ultra-high-speed re-entry into Earth's atmosphere.

China develops two mission architectures – "anchor-and-attach" and "touch-and-go" – using both to maximise the chances of success. The spacecraft lands on the asteroid using four robotic arms, with a drill on the end of each for anchoring.

After delivering samples to Earth in a return capsule, the probe continues on towards the main-belt comet 311P/PANSTARRS. On arrival in 2034, it uses a variety of imaging, spectrometer, and other instruments to investigate whether such a comet may have delivered water to the young Earth. It also provides insight into the differences between active asteroids and classic comets.*

Credit: Tao Zhang, Kun Xu, and Xilun Ding/Nature Astronomy

Construction of the Gordie Howe Bridge is completed

Among the most notable infrastructure projects to reach construction completion in 2025 was the Gordie Howe Bridge,* linking Detroit in the United States with Windsor in Canada. This modern crossing was needed to relieve immense pressure on the historic Ambassador Bridge further east. The latter, built in 1929, had long struggled with heavy congestion and bottlenecks for both freight and passenger traffic.

The new bridge was designed to provide a major boost in capacity for one of the most congested trade corridors in North America – a region handling nearly 30% of all goods travelling between the two countries, totalling around US$150 billion each year. Its construction would reduce lengthy border delays and enable dramatically improved journey times.

A proposal for the Gordie Howe Bridge emerged in 2004, with a joint announcement by the governments of the United States and Canada. The project's funding would involve a blend of public and private investment on both sides of the border. After years of environmental assessments and legal wrangling, officials broke ground in 2018 and began pushing forward with construction. During its development, the bridge faced significant opposition from billionaire Manuel Moroun, owner of the Ambassador Bridge, who attempted to block the project in courts to protect his lucrative toll revenues.

Despite these challenges, workers completed construction of the Gordie Howe Bridge in late 2025, only slightly behind schedule due to COVID-19, with final commissioning and opening to traffic scheduled for early 2026. Its state-of-the-art design includes a total of six lanes for automotive traffic, a bicycle lane, and a footpath, as well as sophisticated inspection facilities to expedite border processes. The cable-stayed bridge consists of two A-shaped towers, standing 220 m (722 ft) tall, built on opposite banks of the Detroit River. The road deck itself is held up using 216 cable stays. It has the longest main span of any cable-stayed bridge in North America, at 853 m (2,800 ft), with a total bridge length of 2.5 km (1.6 mi).

The wider road network around the bridge has seen upgrades too, ensuring smooth connections to major highways. As well as cutting congestion and pollution, the project created thousands of local jobs during construction. Its completion paves the way for stronger economic ties between the United States and Canada in the decades ahead, supported by a 30-year maintenance and operations agreement under its public-private partnership. The bridge is expected to remain a vital infrastructure asset well into the future, with a projected lifetime of at least 125 years.

Credit: Windsor-Detroit Bridge Authority

QuEra demonstrates a 3,000-qubit quantum system

Quantum computing – a new and potentially far more powerful way of processing information – has steadily evolved over recent decades, moving from theoretical concepts to experimental machines designed to tackle certain problems beyond the reach of classical supercomputers.

At the heart of this progress lies the qubit, or 'quantum bit', the fundamental building block of quantum computers. As qubit counts grow, so too does the potential computational power of these devices. Even relatively modest increases in scale can allow researchers to explore calculations involving astronomically large numbers, opening pathways to applications in cryptography, drug discovery, materials science, and more.
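The link between qubit count and "astronomically large numbers" can be made concrete with a little arithmetic. Describing the general state of n qubits on a classical computer takes 2^n complex amplitudes, so each added qubit doubles the size of the description. The short Python sketch below illustrates this under stated assumptions: the function names are hypothetical, and the figure of 16 bytes per amplitude assumes two 64-bit floating-point numbers per complex value. It is a rough illustration of scale, not a measure of any real machine's useful computing power.

```python
def state_vector_size(num_qubits: int) -> int:
    """Number of complex amplitudes in a general state of num_qubits qubits."""
    return 2 ** num_qubits


def memory_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Classical memory needed to store every amplitude.

    Assumes 16 bytes per amplitude (two 64-bit floats); this is an
    illustrative assumption, not a property of any particular simulator.
    """
    return state_vector_size(num_qubits) * bytes_per_amplitude


# Qubit counts chosen for illustration, including figures quoted in the text.
for n in (10, 30, 50, 256, 3000):
    digits = len(str(state_vector_size(n)))
    print(f"{n:>5} qubits: 2^{n} amplitudes, a number with {digits} decimal digits")
```

Storing the full state of just 50 qubits in this way would already require around 18 petabytes, which is why even modest increases in qubit count move quickly beyond what classical simulation can follow.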

In 2023, the 1,000-qubit threshold was crossed for the first time. Atom Computing announced a neutral-atom system with 1,180 qubits in October, and IBM followed in December with its 1,121-qubit Condor processor. Alongside rising qubit counts, attention increasingly shifted toward coherence, connectivity, and error correction – factors essential for scaling practical quantum systems.

Founded in 2019 by Harvard and MIT alumni, Boston-based QuEra Computing had previously developed its 256-qubit Aquila system, introducing local qubit control within a neutral-atom architecture. Building on this foundation, QuEra achieved a major milestone in 2025 by demonstrating a neutral-atom quantum system containing 3,000 physical qubits in laboratory conditions.* The system used precisely arranged arrays of individual atoms, held in place by lasers and manipulated to act as qubits.

In parallel with scaling physical qubit counts, QuEra and its collaborators demonstrated early forms of logical qubits by encoding quantum information across multiple physical qubits to suppress errors. Although still experimental and not yet suitable for fault-tolerant computation, these demonstrations marked an important step toward practical error correction in large-scale quantum hardware.
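The basic idea of spreading information across several physical carriers to suppress errors can be illustrated with a classical analogy. The Python sketch below models a simple repetition code: one logical bit is stored in n independent copies, each flipped with probability p, and a majority vote recovers the value. This is an assumption-laden illustration only. Real quantum error-correcting codes cannot copy states, must also handle phase errors, and the specific codes used by QuEra are not described here; the function name and parameters are hypothetical.

```python
from math import comb


def logical_error_rate(p: float, n: int = 3) -> float:
    """Probability that a majority vote over n copies gives the wrong answer.

    Each copy flips independently with probability p (a simplifying
    assumption). The vote fails when more than half of the copies flip.
    """
    majority = n // 2 + 1
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(majority, n + 1)
    )


# Compare an unprotected bit with 3-copy and 5-copy encodings.
physical_error = 0.01
for copies in (1, 3, 5):
    rate = logical_error_rate(physical_error, copies)
    print(f"{copies} copies: logical error rate {rate:.3e}")
```

In this toy model, a 1% physical error rate falls to roughly 0.03% with three copies, and adding more copies lowers it further, provided the physical error rate is below 50%. The same trade-off, more physical qubits per logical qubit in exchange for lower error rates, is what makes large physical qubit counts relevant to error correction.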

Together, these advances placed QuEra among the leading organisations pushing quantum computing beyond the thousand-qubit threshold. While such systems remained experimental rather than commercial products, the 3,000-qubit milestone highlighted the rapid pace of progress in neutral-atom quantum technologies and underscored the feasibility of scaling quantum hardware to previously unreachable sizes.

References

1 AI to disrupt creative jobs in the near future, Future Timeline Blog:

https://futuretimeline.net/blog/2024/02/3-ai-creative-jobs-disruption-future-timeline.htm

Accessed 23rd March 2025.

2 8 million UK jobs at risk from AI, Future Timeline Blog:

https://futuretimeline.net/blog/2024/03/30-uk-ai-8-million-jobs-risk-future.htm

Accessed 23rd March 2025.

3 Introducing deep research, OpenAI:

https://openai.com/index/introducing-deep-research/

Accessed 23rd March 2025.

4 When do experts expect AGI to arrive?, 80,000 hours:

https://80000hours.org/2025/03/when-do-experts-expect-agi-to-arrive/

Accessed 24th March 2025.

5 Autonomous driving's future: Convenient and connected, McKinsey:

https://www.mckinsey.com/industries/automotive-and-assembly/our-insights/autonomous-drivings-future-convenient-and-connected

Accessed 23rd March 2025.

6 "...by 2040 autonomous robotaxis will be widespread in areas across the U.S., potentially making up around 50% of available taxis by that year."

See Tesla Stock Rebounds As The Robotaxi Approaches; Waymo Tops 100,000 Weekly Fares, Investor's Business Daily:

https://www.investors.com/news/tesla-stock-robotaxi-event-waymo-uber-lyft/

Accessed 23rd March 2025.

7 Robot Shuttles and Autonomous Buses Market Size, Year 2033, Spherical Insights:

https://www.sphericalinsights.com/reports/robot-shuttles-and-autonomous-buses-market

Accessed 23rd March 2025.

8 ILOSTAT data explorer, International Labour Organization (ILO):

https://rshiny.ilo.org/dataexplorer28/?lang=en

Note: The ILO has since deleted the global data covering 1990–2025, but you can view an archived copy here:

https://www.futuretimeline.net/21stcentury/spreadsheets/ilo-labour-force-participation-rate-1990-2025.xlsx

Accessed 24th March 2025.

9 We're about to pass a watershed moment in the decline of TV, Tech Insider:

http://www.techinsider.io/streaming-will-soon-pass-traditional-tv-2015-9

10 Netflix CEO Reed Hastings predicts when cable TV will die for good, Business Insider:

https://www.businessinsider.com/netflix-ceo-says-all-tv-will-be-on-internet-in-10-to-20-years-2015-9

Accessed 10th June 2016.

11 This is the scariest chart in the history of cable TV, Business Insider:

https://www.businessinsider.com/cable-tv-subscribers-plunging-2015-8

Accessed 10th June 2016.

12 BBC: '10 years left of licence fee', BBC News:

http://www.bbc.co.uk/news/entertainment-arts-33215141

Accessed 10th June 2016.

13 Global average Internet speed, 1990-2050, Future Timeline > Data & Trends:

https://futuretimeline.net/data-trends/2050-future-internet-speed-predictions.htm

Accessed 4th January 2026.

14 Largest satellite constellations, Future Timeline > Data & Trends:

https://www.futuretimeline.net/data-trends/23-largest-satellite-constellations.htm

Accessed 4th January 2026.

15 New mobile app could revolutionise human rights justice, Future Timeline Blog:

https://www.futuretimeline.net/blog/2015/06/8-2.htm

Accessed 10th June 2016.

16 Phase-out of fossil fuel vehicles, Wikipedia:

https://en.wikipedia.org/wiki/Phase-out_of_fossil_fuel_vehicles

Accessed 21st January 2024.

17 The Alaskan village set to disappear under water in a decade, BBC:

http://www.bbc.co.uk/news/magazine-23346370

Accessed 30th July 2013.

18 "...the USACE estimates that Kivalina will be uninhabitable by 2025, and the Government Accountability Office recommends that Kivalina's 400 residents leave immediately."

See Climate Change Is Driving Residents of Kivalina From Their Homes, Sierra:

https://www.sierraclub.org/sierra/climate-change-driving-residents-kivalina-their-homes

Accessed 15th January 2024.

19 Trump's mass deportation plans would be costly. Here's why, CNN:

https://edition.cnn.com/2024/10/19/politics/trump-mass-deportation-cost-cec/index.html

Accessed 10th November 2024.

20 Project 2025: What It Means for Women, Families, and Gender Justice, National Women's Law Center:

https://nwlc.org/wp-content/uploads/2024/09/Project-2025-Full-Report.pdf

For archived version, see:

https://www.futuretimeline.net/21stcentury/pdfs/project-2025-full-report.pdf

Accessed 10th November 2024.

21 Policy Agenda, Project 2025:

https://www.project2025.org/policy/

Accessed 10th November 2024.

22 New Glenn, Wikipedia:

https://en.wikipedia.org/wiki/New_Glenn

Accessed 13th December 2025.

23 Vera Rubin Observatory could find up to 70 interstellar objects a year, Phys.org:

https://phys.org/news/2023-11-vera-rubin-observatory-interstellar-year.html

Accessed 27th December 2024.

24 469219 Kamoʻoalewa, Wikipedia:

https://en.wikipedia.org/wiki/469219_Kamo%CA%BBoalewa

Accessed 18th November 2021.

25 China Plans Near-Earth Asteroid Smash-and-Grab, IEEE Spectrum:

https://spectrum.ieee.org/china-plans-near-earth-asteroid-smash-and-grab

Accessed 18th November 2021.

26 Top 25 Construction Megaprojects of 2025, YouTube:

https://youtu.be/pJ3ATTY13qo?si=DtuPOvr5vic5KRGL&t=417

Accessed 7th January 2025.

27 QuEra Computing Marks Record 2025 as the Year of Fault Tolerance and Over $230M of New Capital to Accelerate Industrial Deployment, QuEra:

https://www.quera.com/press-releases/quera...

Accessed 2nd January 2026.
