2030-2033

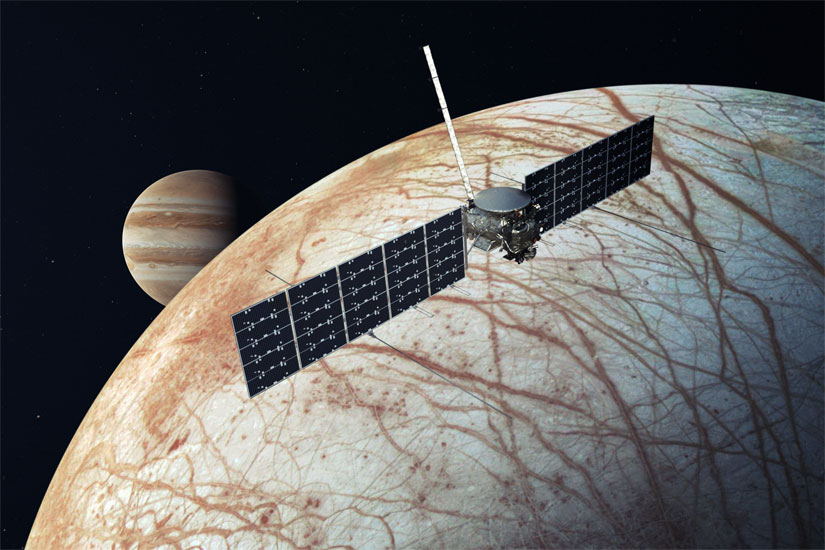

NASA's Europa Clipper mission searches for life

The Europa Clipper is a NASA probe sent to study Europa, the smallest of the four Galilean moons orbiting Jupiter. As a Flagship-class mission, it is among the costliest and most capable science spacecraft to be launched in the agency's history.*

The uncrewed spacecraft departs from Earth in October 2024 aboard a Falcon Heavy, during a 21-day launch window. It utilises gravity assists from Mars in February 2025 and Earth in December 2026, before arriving at Europa in April 2030.*

The probe is designed to observe Europa, determine its habitability and aid in the selection of a landing site for a future lander. The science goals are focused on the three main requirements for life: liquid water, chemistry, and energy. Specifically, the objectives are to study:

- Ice shell and ocean: Confirm the existence and nature of water, within or beneath the ice, and processes of surface-ice-ocean exchange

- Composition: Determine the chemistry and distribution of key compounds and the links to ocean composition

- Geology: Determine the characteristics and formation of surface features, including sites of recent or current activity

To achieve these goals, a large scientific payload of nine instruments is contributed by the Jet Propulsion Laboratory (JPL), as well as various research institutes and universities. The payload and supporting hardware include a topographical imager, ice-penetrating radar, thermal spectrometer, magnetometer, neutral mass spectrometer and high-gain antenna. The electronic components are protected from Jupiter's intense radiation by a 150 kg shield made of titanium and aluminium. Extremely high-resolution photos are made possible by the main imaging system. This maps most of Europa at 50 m (160 ft) resolution, but can also zoom into selected surface areas. These close-up views reveal details as small as 0.5 metres (1.6 ft) in size.

The probe conducts 45 flybys of Europa at distances ranging from 2,700 km (1,678 mi) to as close as 25 km (16 mi) during its 3.5-year mission. It can therefore reach altitudes low enough to pass through plumes of water vapour erupting from the moon's ice crust, obtaining samples for analysis.

A key feature of the mission plans is that the Clipper uses gravity assists from Europa, Ganymede and Callisto to change its trajectory, allowing the spacecraft to return to a different close approach point with each flyby. Each flyby covers a different sector of Europa in order to produce a near-global (95%) topographic survey including ice thickness.

The mission timeline overlaps with ESA's Jupiter Icy Moons Explorer (JUICE), which studies the Jovian system from 2031 to 2035, performing flybys past Europa and Callisto before moving into orbit around Ganymede. The two missions complement each other – with shared data helping to improve the science surrounding the moon's crust and subsurface ocean (the latter is believed to contain more water than all of Earth's oceans combined), as well as guiding the development of future surface landers in the 2030s and 2040s.

Credit: NASA/JPL-Caltech

2030

Global warming continues to increase

Global warming in 2030 is now approaching 1.3°C (2.3°F) above the mid-20th century average and continues to increase.* During the next El Niño, it will exceed the 1.5°C (2.7°F) threshold agreed at the Paris Climate Accords in 2015.

Recent years have seen unprecedented heatwaves, droughts, floods, wildfires, tropical cyclones, and other disasters around the world. The economic losses from weather-related events in 2030 are 25% greater than just a decade earlier, averaging more than $250 billion annually.*

Amid the deepening sense of urgency, environmental activism is now ramping up to levels never seen before. This includes the direct targeting and sabotage of fossil fuel infrastructure, alongside increasingly vociferous campaigns to trigger changes in public behaviour and lifestyles. In the early 2020s, protest actions had already been subject to increasing criminalisation in some countries. With more radicalised green movements having since emerged, these measures are now going a step further to classify some groups as outright terrorist organisations. In South America and other regions, environmental activists who stand in the way of agribusiness, hydroelectric dams, mining, and logging are being murdered in greater numbers than ever.

Following the COVID-19 pandemic, worldwide greenhouse gas emissions had seen a temporary dip. From a record high of 52 gigatonnes (Gt) of CO2 equivalent (meaning the aggregate of CO2, CH4, N2O and F-gases), they fell below 49.5 Gt in 2020. However, growth resumed in subsequent years and soon rebounded to pre-pandemic levels. World leaders announced more ambitious climate targets, while simultaneously approving new oil and gas projects and continuing to provide enormous subsidies to the fossil fuel industry.

Although progress has been made in slowing the rate of emissions – with signs that a peak may now be approaching – they continue to rise in 2030.* Pledges at climate summits have proven to be insufficient for limiting the global average temperature to a sustainable level. The world remains on track for 2°C of warming by the 2040s and nearly 3°C later in the century.

The decarbonisation of the world's various economic sectors has been uneven.* Some areas have seen exponential improvement – such as the rapid adoption of solar PV, wind power, electric vehicles (EVs), and charging stations. Some governments have now phased out coal, while renewables have expanded to cover a majority of electrical generation, in combination with utility-scale batteries and/or nuclear. Rooftop solar, for example, is now mandatory on all new buildings in some countries. Likewise, the EV market has seen phenomenal growth and is on the verge of surpassing traditional ICE cars in terms of global sales.

Another promising field is direct air capture (DAC), to create "negative" emissions. Early prototypes in the 2010s demonstrated that a few tonnes each day could be captured and stored safely. Substantial further investment, including billions of dollars from both private interests and government funding, led to a rapid scaling up. By 2030, some companies have achieved megatonne-scale DAC* and are now planning for gigatonnes of negative emissions by 2050.

But in other areas of the economy, progress has been stagnant. For instance, ruminant meat consumption is still very high, in part due to growing consumer demand in emerging economies. Plant-based meats have risen in popularity, but are nowhere near the level needed to sufficiently reduce emissions from the agricultural sector. Meanwhile, cultured (or "lab-grown") meats, while showing potential for exponential growth, are still at the early stages of market adoption. They have also been drawn into the Anglosphere's culture wars, with debates over "natural vs unnatural" that are similar to concerns over GM foods, as well as the financial impact on local ranchers.

Other areas with insufficient progress include the energy intensity of residential and commercial buildings, the carbon intensity of industrial processes such as steelmaking and cement production, the aviation sector, and the general financing of climate change initiatives around the world. For many countries, the vague wording of Net Zero strategies has led to delays in overall emissions reductions.

The share of greenhouse gas emissions with carbon pricing has roughly doubled since 2020 but still accounts for only 40% of the total. The two main forms of this pricing system are carbon taxing and cap-and-trade programs. A third concept has recently been proposed: a "carbon coin"* or similar currency to encourage a new global market for carbon removal. If paired with carbon taxes, the idea would be that the burning of carbon is taxed, while sequestering carbon is rewarded. Implementing the policy and managing supply and demand (as well as independently verifying each tonne of negative emissions) requires a supranational authority – one of its key functions being to coordinate the operations of major central banks in order to give the carbon currency a guaranteed floor price. For now, this is too big a step for most nations to accept, with concerns over inflation and the effects on domestic currencies. However, discussions resume in later years as the global environmental situation deteriorates.

Overall, the world in 2030 is more climate-aware and more supportive of green policies than ever – but still lacking the urgency and scale of action needed to prevent dangerous climate change.

China's nuclear stockpile exceeds 1,000

From the late 2010s onward, China began to significantly ramp up its nuclear weapons stockpile as a result of several converging factors. Having maintained around 200 warheads for nearly 40 years, the rising superpower sought to modernise and expand this arsenal to more than 1,000, in light of what it perceived as new and emerging threats.

The evolving security dynamics of the Indo-Pacific region – largely influenced by the U.S. military presence and its alliances – prompted the Chinese government to reassess the strength of its nuclear forces. Concerns had also grown about the advances in missile technology by other powers, which had the potential to undermine China's own deterrent.

Between 2015 and 2022, China increased its nuclear weapons stockpile by 60%, from 260 to more than 400. This figure has reached 1,000 by 2030* and is now on track to reach 1,500 by 2035. This forms part of President Xi Jinping's longer-term goal of establishing a "world class" military by 2049, the 100th anniversary of the People's Republic of China.
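The growth figures above can be checked with some quick arithmetic. The following is a minimal sketch using only the warhead counts quoted in the text; the assumption of a constant year-on-year growth rate between the quoted dates is introduced here for illustration and is not a claim about how the stockpile actually grew.

```python
# Rough arithmetic on the stockpile figures quoted in the text.
# Assumption (not from the source): growth between two quoted dates
# is treated as a constant compound annual rate.

def percent_increase(start: float, end: float) -> float:
    """Percentage change from start to end."""
    return (end - start) / start * 100


def annual_growth_rate(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate (in %) that turns start into end."""
    return ((end / start) ** (1 / years) - 1) * 100


# 260 -> exactly 400 is a rise of roughly 54%; a full 60% rise
# corresponds to about 416 warheads, i.e. "more than 400".
rise_to_400 = percent_increase(260, 400)
level_after_60_percent = round(260 * 1.60)

# Implied yearly growth for the later periods mentioned in the text.
rate_2022_2030 = annual_growth_rate(400, 1000, 8)
rate_2030_2035 = annual_growth_rate(1000, 1500, 5)

if __name__ == "__main__":
    print(f"260 -> 400: {rise_to_400:.1f}% increase")
    print(f"260 plus 60%: about {level_after_60_percent} warheads")
    print(f"2022-2030 implied growth: {rate_2022_2030:.1f}% per year")
    print(f"2030-2035 implied growth: {rate_2030_2035:.1f}% per year")
```

Under these assumptions, the expansion to 1,000 warheads implies growth of roughly 12% a year, slowing to around 8% a year on the path to 1,500.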

In addition to hundreds of new warheads, China has developed a larger inventory of nuclear delivery systems on land, sea, and air. It has also invested in the infrastructure needed to sustain this growth. New silo fields for both solid-propellant and liquid-fuelled ICBMs have been constructed, with systems now ready for a "launch on warning" posture, or the ability to fire more quickly.

This rapid escalation in nuclear capabilities has heightened the existing tensions between China and its neighbours, notably India, Japan, and Taiwan. These and other nations in the Asia–Pacific, concerned about their strategic vulnerability, are pursuing their own military enhancements.

The United States, in turn, faces new challenges in maintaining strategic stability in the region. Washington's commitments to its allies are being tested, and it must balance deterrence with efforts to prevent an all-out arms race. China's expanded arsenal is complicating arms control negotiations, which now have to account for a tripartite dynamic involving the U.S., Russia, and China. This has undermined efforts to denuclearise the Korean Peninsula.

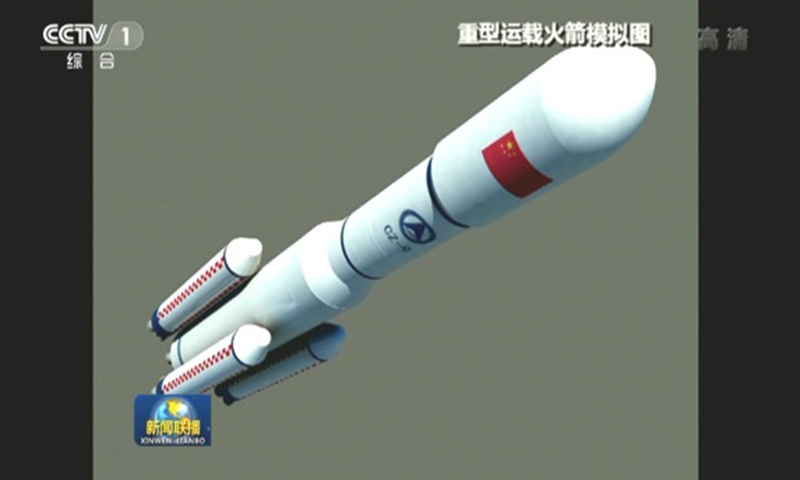

China's Long March 9 rocket begins lunar missions

The Long March 9 (officially CZ-9) is a new Chinese rocket, first announced in 2018 and intended for long-range missions to the Moon, Mars and beyond. With a payload capacity of 140,000 kg to low Earth orbit (LEO) and 50,000 kg to trans-lunar injection, it ranks among the largest rockets ever built – one of the very few in the "super heavy-lift" launch vehicle class.

The Long March 9 is a three-stage rocket with a large core having a diameter of 10 metres, surrounded by a cluster of four engines. Comparable in size to NASA's retired Saturn V, this huge rocket is specifically designed to expand China's capabilities beyond Earth and deeper into space. Sitting atop the rocket is a next-generation, lunar-capable spacecraft with capacity for up to six astronauts.

The Long March 9 completed feasibility studies in 2021 and received government approval that same year. The 14th Five Year Plan (2021–25) enabled it to proceed to the next stage of development. By 2030, a maiden test flight has occurred, and the launch vehicle is being prepared for use in lunar missions.* Following additional test flights, China lands its first astronauts on the Moon in the early part of this decade.*

This is taking place alongside similar efforts by the United States, which now has its own lunar-capable rocket – NASA's Space Launch System – as well as commercial ventures such as SpaceX and Blue Origin. The two nations, having been engaged in a second space race for the last two decades, are now finally seeing the fruits of their long-term research and development.

The Long March 9 forms a pivotal part of China's operations on the Moon, not only for sending astronauts on short-duration missions but also for establishing a more permanent presence. Its huge cargo-carrying capacity allows a scientific outpost to form on the lunar surface during the late 2030s.

Credit: CCTV

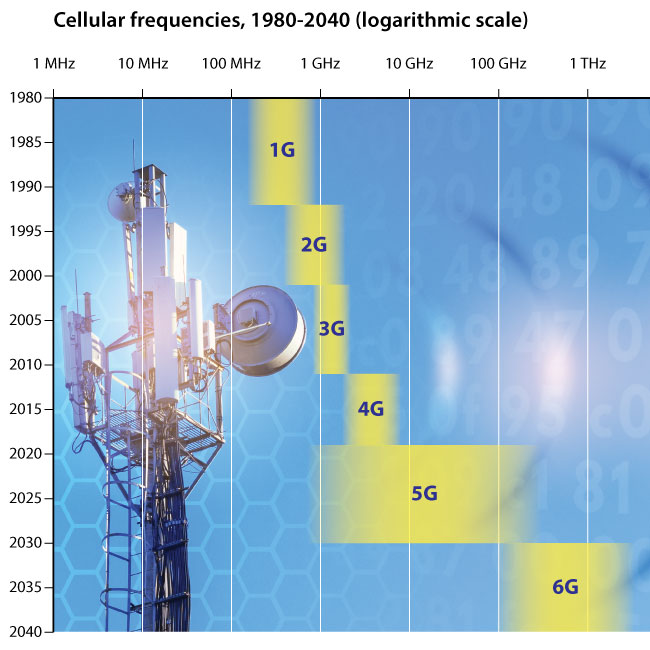

The 6G standard is released

By 2030, a new cellular network standard has emerged that offers even greater speeds than 5G. Early research on this sixth generation (6G) had started during the late 2010s when China,* the USA* and other countries investigated the potential for working at higher frequencies.

Whereas the first four mobile generations tended to operate at between several hundred and several thousand megahertz, 5G had expanded this range into the tens of thousands and hundreds of thousands. A revolutionary technology at the time, it allowed vastly improved bandwidth and lower latency. However, it was not without its problems, as exponentially growing demand for wireless data transfer put ever-increasing pressure on service providers, while even shorter latencies were required for certain specialist and emerging applications.*

This led to development of 6G, based on frequencies ranging from 100 GHz to 1 THz and beyond. A ten-fold boost in data transfer rates would mean users enjoying terabits per second (Tbit/s). Furthermore, improved network stability and latency – achieved with AI and machine learning algorithms – could be combined with even greater geographical coverage. The Internet of Things, already well-established during the 2020s, now had the potential to grow by further orders of magnitude and connect not billions, but trillions of objects.

Following a decade of research and testing, widespread adoption of 6G occurs in the 2030s. However, wireless telecommunications are now reaching a plateau in terms of progress, as it becomes extremely difficult to extend beyond the terahertz range.* These limits are eventually overcome, but require wholly new approaches and fundamental breakthroughs in physics. The idea of a seventh standard (7G) is also placed in doubt by several emerging technologies that support the existing wireless communications, making future advances iterative, rather than generational.*

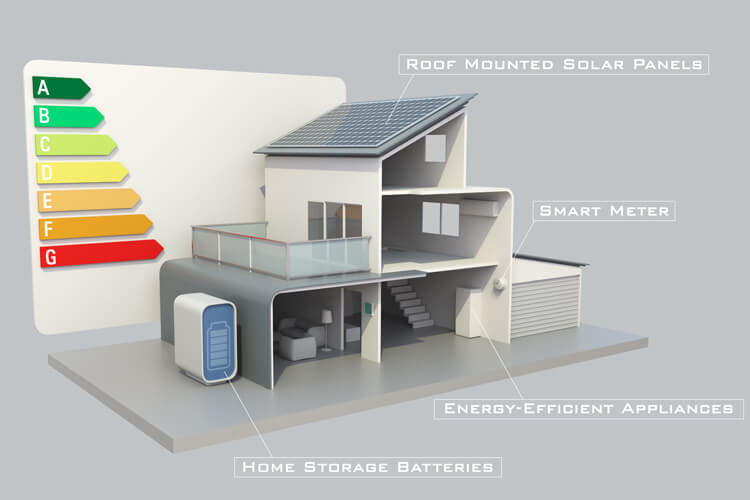

Smart grid technology is widespread in developed nations

In prior decades, the disruptive effects of energy shocks,* alongside ever-increasing demands of growing and industrialising populations, were putting strain on the world's power grids. Blackouts occurred in the worst-hit regions, with consumers becoming more and more conscious of their energy use and taking measures to monitor or cut back their consumption. This already precarious situation was exacerbated by the relatively ancient infrastructure in many countries. Much of the grid at the beginning of the 21st century was extremely old and inefficient, losing more than half of its available electricity during production, transmission and usage. A convergence of business, political, social and environmental issues forced governments and regulators to finally address this problem.

By 2030, integrated smart grids are becoming widespread in the developed world,** the main benefit of which is the optimal balancing of demand and production. Traditional power grids had previously relied on a just-in-time delivery system, where supply was manually adjusted constantly in order to match demand. Now, this problem is being eliminated due to a vast array of sensors and automated monitoring devices embedded throughout the grid. This approach had already emerged on a small scale, in the form of smart meters for individual homes and offices. By 2030, it is being scaled up to entire national grids.

Power plants now maintain constant, real-time communication with all residents and businesses. If capacity is ever strained, appliances instantly self-adjust to consume less power, even turning themselves off completely when idle. Since balancing demand and production is now achieved on a real-time, automatic basis within the grid itself, this greatly reduces the need for "peaker" plants as supplemental sources. In the event of any remaining gap, algorithms calculate the exact requirements and turn on extra generators automatically.
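The demand-response behaviour described here can be illustrated with a toy model. This is a minimal sketch, not how any real grid operator works: the `Appliance` class, the `balance` function, the 50% throttling factor and the example loads are all hypothetical names and numbers introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class Appliance:
    name: str
    load_kw: float
    idle: bool = False      # idle devices may be switched off entirely
    flexible: bool = False  # flexible devices may run at reduced power


def balance(appliances, capacity_kw, reduced_fraction=0.5):
    """Shed load until demand fits capacity.

    Returns (actions, gap_kw), where gap_kw is the shortfall that
    extra generators would still have to cover.
    """
    loads = {a.name: a.load_kw for a in appliances}
    actions = []

    def demand():
        return sum(loads.values())

    # Step 1: switch off idle appliances first.
    for a in appliances:
        if demand() <= capacity_kw:
            break
        if a.idle:
            loads[a.name] = 0.0
            actions.append(f"off:{a.name}")

    # Step 2: throttle flexible appliances that are in use.
    for a in appliances:
        if demand() <= capacity_kw:
            break
        if a.flexible and not a.idle:
            loads[a.name] = a.load_kw * reduced_fraction
            actions.append(f"throttle:{a.name}")

    # Step 3: whatever remains is the gap for extra generation.
    gap_kw = max(0.0, demand() - capacity_kw)
    return actions, gap_kw


home = [
    Appliance("heater", 3.0, flexible=True),
    Appliance("tv", 0.2, idle=True),
    Appliance("ev_charger", 7.0, flexible=True),
    Appliance("oven", 2.5),
]

if __name__ == "__main__":
    print(balance(home, capacity_kw=8.0))
    print(balance(home, capacity_kw=6.0))
```

With 8 kW available, switching off the idle TV and throttling the heater and charger is enough to close the gap. With only 6 kW, a 1.5 kW shortfall remains, which in the scenario above would be met by automatically starting extra generation.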

Computers also help adjust for and level out peaks and troughs in energy demand. Sensors in the grid can detect precisely when and where consumption is highest. Over time, production can be automatically shifted according to the predicted rise and fall in demand. Smart meters can then adjust for any discrepancies. Another benefit of this approach is allowing energy providers to raise electricity prices during periods of high consumption, helping to flatten out peaks. This makes the grid more reliable overall, since it reduces the number of variables that need to be accounted for.

Yet another advantage of the smart grid is its capacity for bidirectional flow. In the past, power transmission could only be done in one direction. Today, a proliferation of local power generation, such as photovoltaic panels and fuel cells, means that energy production is much more decentralised. Smart grids now take into account homes and businesses which can add their own surplus electricity to the system, allowing energy to be transmitted in both directions through power lines.

This trend of redistribution and localisation is also making large-scale renewables more viable, since the grid is now adaptable to the intermittent power output of solar and wind. On top of this, smart grids are also designed with multiple full load-bearing transmission routes. This way, if a broken transmission line causes a blackout, sensors instantly locate the damaged area while electricity is rerouted to the affected area. Crews no longer need to investigate multiple transformers to isolate a problem, and blackouts are reduced as a result. This also prevents any kind of domino effect from setting off a rolling blackout.
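The rerouting idea can be sketched as a path search over a network with redundant lines. This is an illustrative toy only: the node names, the `find_route` function and the use of a simple breadth-first search are assumptions made here, and real grids must also account for line capacity and load flow.

```python
from collections import deque


def find_route(lines, source, target, broken=frozenset()):
    """Breadth-first search for a path from source to target,
    skipping any transmission lines listed as broken."""
    graph = {}
    for a, b in lines:
        if (a, b) in broken or (b, a) in broken:
            continue  # a damaged line carries no power
        graph.setdefault(a, []).append(b)
        graph.setdefault(b, []).append(a)

    queue = deque([[source]])
    visited = {source}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for nxt in graph.get(node, []):
            if nxt not in visited:
                visited.add(nxt)
                queue.append(path + [nxt])
    return None  # no intact route: the target is blacked out


# Hypothetical network with two independent routes to the town.
LINES = [
    ("plant", "sub_a"),
    ("sub_a", "town"),
    ("plant", "sub_b"),
    ("sub_b", "town"),
]

if __name__ == "__main__":
    print(find_route(LINES, "plant", "town"))
    print(find_route(LINES, "plant", "town", broken={("sub_a", "town")}))
```

When the line between `sub_a` and `town` fails, the search returns the alternative route through `sub_b`. Only if every route is cut does the function return `None`, which corresponds to an actual blackout.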

Overall, this new "internet of energy" is far more sustainable, efficient and reliable. Energy costs are reduced, while paving the way to a post-carbon economy. Countries that quickly adopt smart grids are better protected from oil shocks, while greenhouse gas emissions are reduced by almost 20 per cent in some nations.* As the shift to clean energy continues, this situation will only improve, expanding to even larger scales. Regions begin merging their grids together on a country-to-country, and eventually continent-wide, basis.

Coal power is phased out by Germany

During the 20th century, Germany obtained its electricity predominantly from fossil fuels (particularly coal) and then later also nuclear power. As Europe's largest consumer of electricity, it had very high carbon emissions, ranking sixth in the world. At the dawn of the 21st century, however, a radical change began to occur as its supply shifted to new, less polluting forms of energy.

In 2010, the German government published Energiewende ("energy transition"), a key policy document outlining targets for increasing the share of renewables in power consumption, which included greenhouse gas (GHG) emission reductions of 80–95% by 2050 (relative to 1990).

Following the Fukushima disaster in Japan in 2011, the government abandoned the use of nuclear power as a bridging technology and went further, deciding to phase out nuclear altogether by 2023. This move triggered a brief rise in coal, to make up the shortfall. However, renewables were expanding rapidly, with solar and wind forming an ever-larger share of electric generating capacity. In 2019, a government-appointed coal commission introduced a proposed pathway to phase out all coal power within two decades.

By the end of the 2010s, Germany had 40 GW of installed coal power capacity, with 21 GW fired by bituminous coal – referred to as "hard coal" by Germany's Federal Network Agency – and 19 GW by lignite, or "brown coal". A bituminous coal plant, Datteln 4, entered service in mid-2020, adding 1.1 GW and becoming Germany's last ever coal plant to be newly connected to the grid. The government planned to take all 84 sites offline by 2038.

In September 2021, Germany held federal elections. With Angela Merkel stepping down and the ruling Union parties (CDU/CSU) recording their worst ever result, Olaf Scholz of the Social Democratic Party (SPD) formed a three-way coalition alongside the Free Democratic Party (FDP) and the Greens. As part of this deal, Germany's previous commitment to phase out coal power would be brought forward by eight years, from 2038 to 2030.

During the first half of the 2020s, many plants voluntarily went offline in the north, west and south of the country. As renewables continued to surge in capacity, forced closures occurred in the latter part of the decade. The phase-out had commenced in western Germany, to soften the impact in the economically poorer eastern side of the country.

By 2030, the final plant shutdown has occurred. More than 80% of Germany's electricity is now generated by renewables. As part of this transition, it is now compulsory for solar energy to be included on the roofs of all new commercial buildings, while each of the country's 16 states must provide at least 2% of their land area for wind power. Around 15 million of Germany's cars are now electric, as the European Union nears its target of phasing out new cars with internal combustion engines by 2035. Germany continues to make progress in reducing greenhouse gas emissions, on its way to net zero by 2045.*

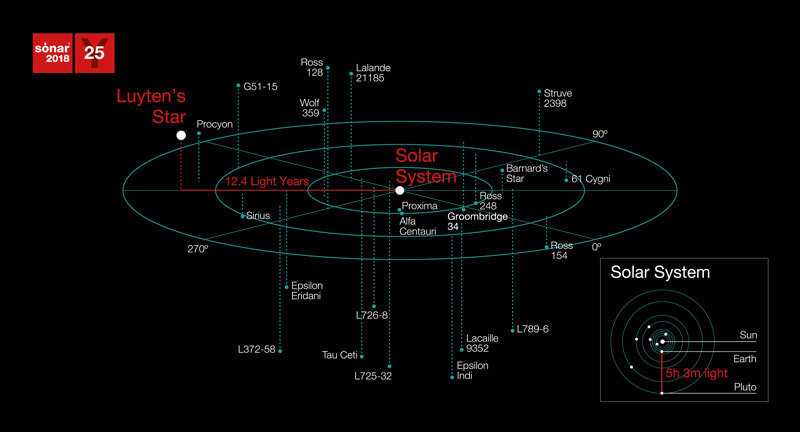

An interstellar message arrives at Luyten's Star

Luyten's Star (GJ 273) is a red dwarf located about 12.4 light-years from Earth. Despite its relative proximity, it has a visual magnitude of only 9.9, making it too faint to be seen with the naked eye. It was named after Willem Luyten, who, in collaboration with Edwin G. Ebbighausen, first determined its high proper motion in 1935. Luyten's Star is one-quarter the mass of the Sun and has 35% of its radius.

In March 2017, two planets were discovered orbiting Luyten's Star. The outer planet, GJ 273b, was a "Super Earth" with 2.9 Earth masses and found to be lying in the habitable zone, with potential for liquid water on the surface. The inner planet, GJ 273c, had 1.2 Earth masses, but orbited much closer, with an orbital period of only 4.7 days.

In October 2017, a project known as "Sónar Calling GJ 273b" was initiated. This would send music through deep space in the direction of Luyten's Star in an attempt to communicate with extraterrestrial intelligence. The project – organised by Messaging Extraterrestrial Intelligence (METI) and Sónar (a music festival in Barcelona, Spain) – beamed a series of radio signals from a radar antenna at Ramfjordmoen, Norway. The first transmissions were sent on 16th, 17th and 18th October, with a second batch in April 2018.

This became the first radio message ever sent to a potentially habitable exoplanet. The message included 33 music pieces of 10 seconds each, by artists including Autechre, Jean Michel Jarre, Kate Tempest, Kode 9, Modeselektor and Richie Hawtin. Also included were scientific and mathematical tutorials sent in binary code, designed to be understandable by extraterrestrials; a recording of an unborn baby girl's heartbeat; along with poetry and political statements about humans.

Because radio signals travel at the speed of light, and the distance is around 70 trillion miles, the earliest possible date for a response to arrive back would be 2042.*
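The 2030 arrival and 2042 reply dates follow from simple light-travel arithmetic. The sketch below assumes the 12.4 light-year distance quoted above; the decimal year 2017.8 for the October 2017 transmission and the conversion factor of 5.879 trillion miles per light-year are approximations introduced here.

```python
# Light-travel arithmetic for the Sónar Calling GJ 273b message.
# Assumptions (not from the source): the first transmission is
# approximated as decimal year 2017.8, and one light-year is taken
# as 5.879 trillion miles.

DISTANCE_LY = 12.4              # distance to Luyten's Star, from the text
SENT_YEAR = 2017.8              # approximate date of the first transmission
TRILLION_MILES_PER_LY = 5.879   # approximate conversion factor


def arrival_year(sent_year: float, distance_ly: float) -> float:
    """Year in which a light-speed signal reaches the target."""
    return sent_year + distance_ly


def earliest_reply_year(sent_year: float, distance_ly: float) -> float:
    """Earliest year a reply could reach Earth, assuming the
    recipient answers immediately."""
    return sent_year + 2 * distance_ly


distance_trillion_miles = DISTANCE_LY * TRILLION_MILES_PER_LY

if __name__ == "__main__":
    print(f"Message arrives: ~{arrival_year(SENT_YEAR, DISTANCE_LY):.1f}")
    print(f"Earliest reply:  ~{earliest_reply_year(SENT_YEAR, DISTANCE_LY):.1f}")
    print(f"Distance: ~{distance_trillion_miles:.0f} trillion miles")
```

Under these assumptions, the one-way trip takes 12.4 years and the round trip 24.8 years, placing the arrival in 2030 and the earliest reply in 2042. The distance works out at roughly 73 trillion miles, in line with the rounded figure quoted above.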

Credit: Sonar

FIFA World Cup 2030

The FIFA World Cup is held from 8th June–21st July 2030. This tournament is especially significant as it marks the centenary of the first ever World Cup – held in July 1930. Back then, only 13 teams competed, with hosts Uruguay emerging victorious in the final, defeating Argentina with a score of 4–2.

In 2030, a total of 48 teams are playing.* For the first time, three countries from two continents are hosting the competition: Morocco, Portugal, and Spain. Additionally, Argentina, Paraguay, and Uruguay are part of the hosting to commemorate the 100th anniversary. Alongside a special centenary celebration, the first game is played at the Estadio Centenario stadium in Montevideo, Uruguay, where the 1930 final took place. The second and third games are played in Argentina and Paraguay, respectively. The rest of the games and the opening ceremony are held in Morocco, Portugal, and Spain.

The 2030 World Cup, while undoubtedly a historic and much-celebrated event, faces logistical challenges due to its geographic spread. It has caused a backlash from fans, football officials, and environmental groups, because of the large distance between Europe and South America. This requires considerable plane travel, increases the carbon footprint of FIFA, and means that players have only a short amount of rest after travelling back to the main hosts in Iberia and Morocco.

Estadio Centenario in Montevideo, Uruguay. Credit: Marcelo Campi, CC BY-SA 2.0, via Wikimedia Commons

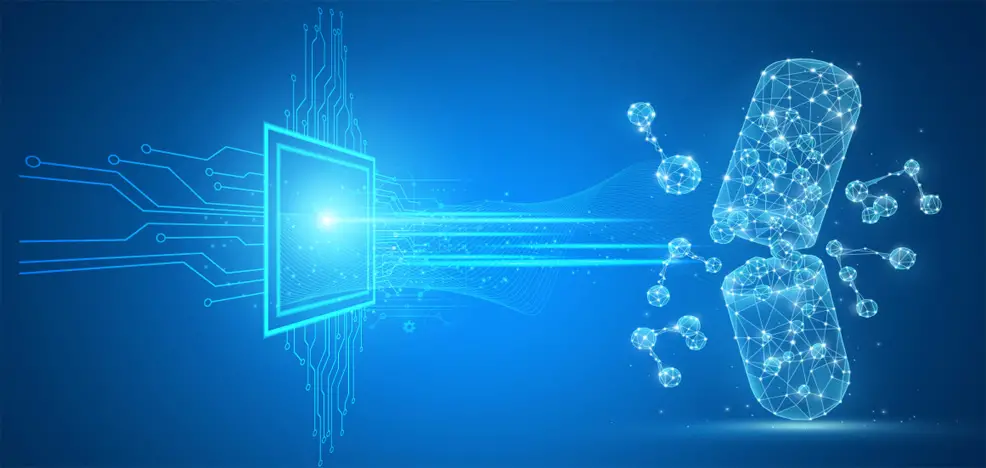

AI-designed antibiotics are available on prescription

During the late 2010s and early 2020s, artificial intelligence (AI) became an increasingly powerful tool for medical research – demonstrating potential in expediting the drug discovery process. Deep learning algorithms grew rapidly in capability, analysing huge datasets to predict the likely effectiveness of millions of chemical compounds. This began to significantly reduce both the time and costs involved in the initial stages of research.

Antibiotic resistance had emerged as a serious and worsening threat to public health. Absent new treatments against bacteria and "superbugs", the world faced a more than ten-fold increase in deaths from these infections, potentially surpassing cancer to reach 10 million a year by 2050.

In 2020, a team at the Massachusetts Institute of Technology (MIT) used an in silico deep learning approach to identify a powerful new antibiotic compound, named Halicin (after the fictional AI Hal from 2001: A Space Odyssey). The algorithm, trained on a dataset of about 2,500 molecules, picked out those with greatest potential to kill bacteria using different mechanisms than existing drugs. In laboratory tests, the drug killed many of the world's most problematic disease-causing bacteria, including some strains resistant to all known treatments. It also cleared infections in two different mouse models. Halicin's unusual mechanism of action involved disrupting a bacterial cell's ability to maintain a normal electrochemical membrane gradient, thus inhibiting metabolism and resulting in cell death.

In 2023, researchers from MIT, in collaboration with McMaster University, used AI to develop another new compound. This time, the training dataset involved 7,500 molecules, triple the number used for Halicin. The resulting drug, called Abaucin, showed effectiveness against Acinetobacter baumannii, one of three superbugs identified by the World Health Organization as a critical threat to humanity. Its mode of action involved inhibiting lipoprotein transport. Researchers believed that its precision would make it harder for drug-resistant bacteria to emerge, and could lead to fewer side-effects.

Further testing in the laboratory led to even more potent versions of both Halicin and Abaucin, followed by clinical trials. These and other drugs began to emerge as a promising solution to antibiotic resistance. The first generation of AI-designed antibiotics become available on prescription by 2030.*

Depression is the number one global disease burden

When measured by healthy years of life lost, depression has now overtaken heart disease to become the leading global disease burden.* This includes both years lived in a state of poor health and years lost due to premature death. Principal causes of depression include debt worries, unemployment, crime, violence (especially family violence), war, environmental degradation and disasters. The ongoing economic stagnation around the world is a major contributing factor. However, progress is being made with destigmatising mental illness.

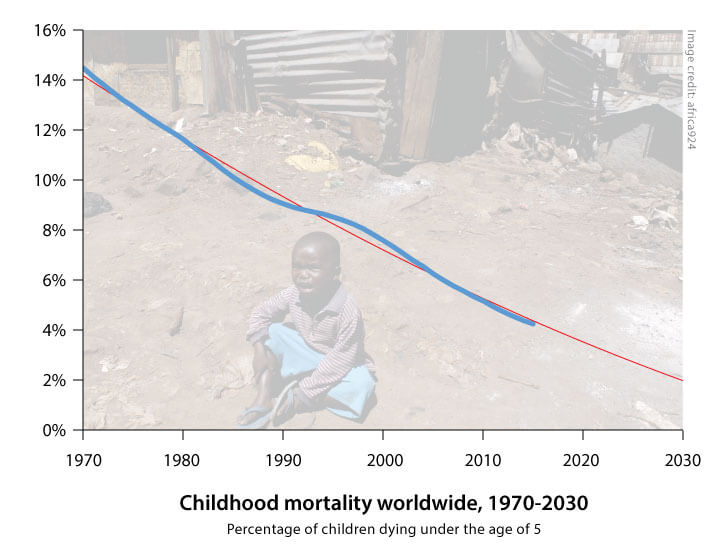

Child mortality is approaching 2% globally

Childhood mortality, defined as the number of children dying under the age of five, was a major issue during the late 20th century. In 1970, more than 14% of children worldwide never saw their 5th birthday, while in Africa the figure was even higher at over 24%. The gap between rich and poor nations was staggering, with a mortality rate of only 24 per 1,000 live births in the most industrialised countries, an order of magnitude lower.*

Improvements in medicine, education, economic opportunity and living standards led to a fall in child deaths over subsequent decades. More and more children were being saved by low-tech, cost-effective, evidence-based measures. These included vaccines, antibiotics, micronutrient supplementation, insecticide-treated bed nets, improved family care and breastfeeding practices, and oral rehydration therapy. The empowerment of women, the removal of social and financial barriers to accessing basic services, new innovations that made the supply of critical services more available to the poor and increasing local accountability were policy interventions that reduced mortality and improved equity.

The U.N.'s Millennium Development Goals included the ambitious target of reducing by two-thirds (between 1990 and 2015) the number of children dying under age five. While this goal failed to be met in time, the progress achieved was still significant – a drop from 92 to 43 deaths per 1,000 live births. Public, private and non-profit organisations, keen to build on their experience and ensure the continuation of this trend, made childhood survival a focus of the new sustainable development agenda for 2030. A new objective was set, which aimed to lower the under-five mortality figure to less than 25 per 1,000 live births worldwide.*
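The shortfall against the Millennium Development Goal can be quantified directly from the figures above. This is a minimal sketch; applying the two-thirds target to the mortality rate per 1,000 live births, rather than to the absolute number of deaths, is an assumption made here for illustration.

```python
# Progress against the two-thirds reduction target, using the rates
# quoted in the text (deaths per 1,000 live births).
# Assumption: the target is applied to the rate, not the raw count.

RATE_1990 = 92
RATE_2015 = 43
TARGET_REDUCTION = 2 / 3
SDG_2030_TARGET = 25  # later goal mentioned in the text


def percent_reduction(start: float, end: float) -> float:
    """Percentage fall from start to end."""
    return (start - end) / start * 100


achieved = percent_reduction(RATE_1990, RATE_2015)
target_rate_2015 = RATE_1990 * (1 - TARGET_REDUCTION)
still_needed_by_2030 = percent_reduction(RATE_2015, SDG_2030_TARGET)

if __name__ == "__main__":
    print(f"Achieved 1990-2015: {achieved:.1f}% reduction")
    print(f"Rate needed to meet the target: {target_rate_2015:.1f} per 1,000")
    print(f"Further cut needed for 2030 goal: {still_needed_by_2030:.1f}%")
```

On these figures, the fall from 92 to 43 is a reduction of a little over half, whereas a two-thirds cut would have required a rate of around 31 per 1,000. Reaching 25 per 1,000 by 2030 then requires a further drop of roughly 42% from the 2015 level.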

With ongoing improvements in public health and education – aided by widespread access to the Internet in developing regions* – this new goal was largely met, with further declines in childhood mortality from 2015 to 2030. Although some regions in Africa still have unacceptably high rates, the overall worldwide figure is around 2% by 2030.*

One recent development now having a major impact is the mass application of gene drives to control mosquito populations, greatly reducing the number of malaria cases.* Huge advances have also been made in the prevention and treatment of HIV, which is no longer the death sentence it used to be. Some diseases have been eradicated by now, including polio, Guinea worm, elephantiasis, river blindness, and blinding trachoma.*

However, the progress achieved in recent decades is now threatened by the worsening problems of climate change and other environmental issues, along with antibiotic resistance.* Even discounting these emerging threats, it is simply impractical and impossible to prevent every childhood death with current levels of technology and surveillance. As such, childhood mortality begins to taper off – not reaching zero until much further into the future.

The Muslim population has increased significantly

By 2030, the Muslim share of the global population has reached 26.4%. This compares with 19.1% in 1990.* Countries which have seen the largest growth rates include Ireland (190.7%), Canada (183.1%), Finland (150%), Norway (149.3%), New Zealand (146.3%), the United States (139.5%) and Sweden (120.2%). Those which have experienced the biggest falls include Lithuania (-33.3%), Moldova (-13.3%), Belarus (-10.5%), Japan (-7.6%), Guyana (-7.3%), Poland (-5.0%) and Hungary (-4.0%).

A number of factors have driven this trend. Firstly, Muslims have higher fertility rates (more children per woman) than non-Muslims. Secondly, a larger share of the Muslim population has entered – or is entering – the prime reproductive years (ages 15-29). Thirdly, health and economic gains in Muslim-majority countries have resulted in greater-than-average declines in child and infant mortality rates, with life expectancy improving faster too.

Despite an increasing share of the population, the overall rate of growth for Muslims has begun to slow when compared with earlier decades. Later this century, both Muslim and non-Muslim numbers will approach a plateau as the global population stabilises.* The spread of democracy* and improved access to education* are emerging as major factors in the slowing fertility rates (though Islam has yet to undergo the sort of renaissance and reformation that Christianity went through).

Sunni Muslims continue to make up the overwhelming majority (90%) of Muslims in 2030. The portion of the world's Muslims who are Shia has declined slightly, mainly because of relatively low fertility in Iran, where more than a third of the world's Shia Muslims live.

Orbital space junk is becoming a major problem for space flight

Space junk – debris left in orbit from human activities – has been steadily building in low-Earth orbit for more than 70 years. It is made up of everything from spent rocket stages, to defunct satellites, to debris left over from accidental collisions. Individual pieces can be several metres across, but most are minuscule particles such as metal shavings and paint flecks. Despite their small size, such pieces of debris often travel at speeds of 30,000 mph – easily fast enough to deal significant damage to a spacecraft. Satellites, rockets and space stations, as well as astronauts conducting spacewalks, have all had to cope with the increasing damage caused by collisions with these particles.

One of the biggest issues with space junk is the fact that it grows exponentially. This trend, along with the increasing number of countries entering space, has made orbital collisions an almost regular occurrence in recent years. The newest space-faring nations have been particularly affected.

Events similar to the 2009 collision of the US Iridium and Russian Kosmos satellites have raised fears of the so-called Kessler Syndrome. In this scenario, space junk reaches a critical mass, triggering a chain reaction of collisions until virtually every satellite and man-made object in an orbital band has been reduced to debris. Such an event could destroy the global economy and render future space travel almost impossible.

By 2030, the amount of space junk in orbit has tripled, compared to 2011.* Countless millions of fragments can now be found at various levels of orbit. A new generation of shielding for spacecraft and rockets is being developed, along with tougher and more durable space suits for astronauts. This includes the use of "self-healing" nanotechnology materials, though expenses are too high to outfit everything.

Larger chunks of debris have also been impacting on Earth itself more frequently. Though most land in the ocean (since the planet's surface is 70% covered by water), a few crash on land, necessitating early warning systems for people in the affected areas.

Increased regulation has begun to mitigate the growth of space debris, while better shielding and repair technology has reduced the frequency of damage. Increased computing power and tracking systems are also helping to predict the path of debris and instruct spacecraft to avoid the most dangerous areas. Options to physically move debris are also being deployed – including nets and harpoons fired from small satellites, along with ground-based lasers that can push junk into decaying orbits so it burns up in the atmosphere. Despite this, space junk remains an expensive problem for now.

The UK space industry has quadrupled in size

In 2010, the UK government established the United Kingdom Space Agency (UKSA). This replaced the British National Space Centre and took over responsibility for key budgets and policies on space exploration – representing the country in all negotiations on space matters and bringing together all civil space activities under one single management.

By 2014, the UK's thriving space sector was contributing over £9 billion ($15.2 billion) to the economy each year and directly employing 29,000 people, with an average growth rate of almost 7.5%. Recognising its strong potential, the government backed plans for a fourfold expansion of the industry.* New legal frameworks allowed a spaceport to be established in the UK – triggering growth of space tourism, launch services and other hi-tech companies.

By 2030, the UK has become a major player in the space industry, with a global market share of 10%. Having quadrupled in size, its space industry now contributes £40 billion ($67 billion) a year to the economy and has generated over 100,000 new high-skilled jobs.* The UK has significantly increased its leadership and influence in crucial areas like satellite communications, Earth observation, disaster relief and climate change monitoring. The growth of space-based products and services means the UK is now among the first 100% broadband-enabled countries in the world.* This has also reduced the costs of delivering government services to all citizens, regardless of their location.
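To put the fourfold expansion in perspective, the sketch below works out the compound annual growth rate implied by the figures quoted above, and compares it with the roughly 7.5% rate mentioned for the sector. It assumes steady compounding between 2014 and 2030 and takes the quoted values at face value; the function and variable names are illustrative.

```python
# Illustrative arithmetic only: figures are those quoted in the text.
# ASSUMPTION: growth compounds at a constant annual rate from 2014 to 2030.

def compound_annual_growth(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% a year)."""
    return (end_value / start_value) ** (1 / years) - 1


SIZE_2014_BN = 9.0    # GBP billions, quoted for 2014
SIZE_2030_BN = 40.0   # GBP billions, quoted for 2030
YEARS = 2030 - 2014

required_rate = compound_annual_growth(SIZE_2014_BN, SIZE_2030_BN, YEARS)

# For comparison: where the quoted ~7.5% rate would lead over the same period.
HISTORICAL_RATE = 0.075
size_at_historical_rate = SIZE_2014_BN * (1 + HISTORICAL_RATE) ** YEARS

print(f"Implied growth 2014-2030: {required_rate:.1%} a year")
print(f"Size in 2030 at 7.5% a year: about £{size_at_historical_rate:.0f} billion")
```

Under these assumptions, reaching £40 billion requires sustained growth of just under 10% a year, somewhat faster than the earlier average.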

The Lockheed Martin SR-72 enters service

The SR-72 is an unmanned, hypersonic aircraft intended for intelligence, surveillance and reconnaissance. Developed by Lockheed Martin, it is the long-awaited successor to the SR-71 Blackbird that was retired in 1998. The plane combines both a traditional turbine and a scramjet to achieve speeds of Mach 6.0, making it twice as fast as the SR-71 and capable of crossing the Atlantic Ocean in under an hour. A scaled demonstrator was built and tested in 2018. This was followed by a full-size demonstrator in 2023 and then entry into service by 2030.* The SR-72 is similar in size to the SR-71, at approximately 100 ft (30 m) long. With an operational altitude of 80,000 feet (24,400 metres), combined with its speed, the SR-72 is almost impossible to shoot down.

Credit: Lockheed Martin

Half of America's shopping malls have closed

For much of the 20th century, shopping malls were an intrinsic part of American culture. At their peak in the mid-1990s, the country was building 140 new shopping malls every year. But from the early 2000s onward, underperforming and vacant malls – known as "greyfield" and "dead mall" estates – became an emerging problem. In 2007, a year before the Great Recession, no new malls were built in America, for the first time in half a century. Only a single new mall, City Creek Center Mall in Salt Lake City, was built between 2007 and 2012. The economic health of surviving malls continued to decline, with high vacancy rates creating an oversupply.*

A number of changes had occurred in shopping and driving habits. More and more people were living in cities, with fewer interested in driving and people in general spending less than before. Tech-savvy Millennials, in particular, had embraced new ways of living. The Internet had made it far easier to identify the cheapest products and to order items without having to be there in person.

In earlier decades, this had mostly affected digital goods such as music, books and videos, which could be obtained in a matter of seconds – but even clothing was eventually possible to download, thanks to the proliferation of 3D printing in the home.* Many of these abandoned malls are now being converted to other uses, such as housing.

The metaverse has reached $5 trillion in size

The metaverse is a network of online 3D worlds, accessed via the use of virtual reality (VR) and augmented reality (AR) headsets. The 2003 virtual world platform Second Life is often described as the first metaverse, as it incorporated aspects of social media into a persistent three-dimensional world with the user represented as an avatar.

Over the years, the metaverse evolved and grew to attract many more users, becoming essentially the next major iteration of the Internet. By 2021, it had reached an inflection point, with global investment of $57 billion, a figure that more than doubled to over $120 billion just a year later.

By 2030, the metaverse has reached a market size of $5 trillion and continues to grow.* The biggest revenue generator is e-commerce ($2.6 trillion), ahead of sectors such as virtual learning ($270 billion), advertising ($206 billion), and gaming ($125 billion). Almost all the major retail brands now have their own virtual store or shop front, where many items can be browsed and interacted with, along with a sizeable number of small and medium-sized enterprises (SMEs).

Education, training, conferences, and business meetings in VR are now commonplace. Health and fitness is another major area of use: treadmill walkers and runners can, for example, move across the simulated surfaces of other worlds, through myriad cities and locations on Earth, or perhaps even through different periods of history. Meanwhile, advertising is more interactive (and some would say intrusive) than ever before.

Gaming in VR had already been an option in previous decades, though expensive and with a somewhat limited selection of games. This situation has now improved greatly, with a vast range of titles on offer, alongside greater opportunities for meeting and connecting with fellow players. Many of the environments featured in these virtual experiences are created and maintained by proto-artificial general intelligences (AGIs), which can auto-generate objects, content, and even whole storylines without a human programmer. However, individual and community-generated worlds are just as popular.

Like the explosion in sales of smartphones during the 2010s, the metaverse has entered the mainstream through a combination of technological advances and rapidly falling hardware costs. VR and AR headsets are now cheap and accessible to billions of people, often providing up to 8K resolution per eye.

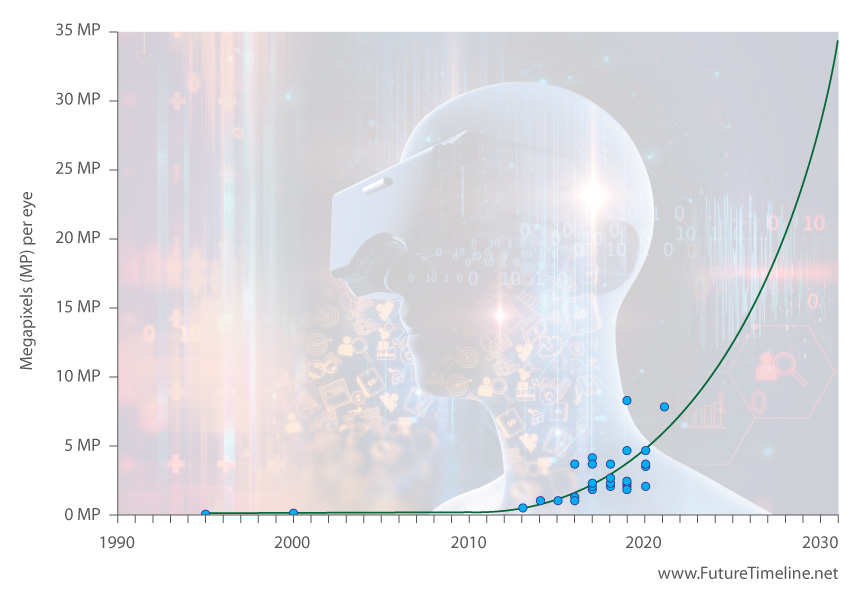

8K VR headsets are common

8K displays (amounting to 33 MP of resolution per eye) are a fairly standard feature of virtual reality (VR) in 2030. These offer quadruple the pixel count of the best consumer VR products from a decade earlier.
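The pixel figures above can be checked with a few lines of Python. This is only a sketch: it assumes that "8K" and "4K" refer to the common UHD formats of 7680×4320 and 3840×2160, whereas actual headset panels may use different dimensions, and the function name is illustrative.

```python
# Illustrative arithmetic only.
# ASSUMPTION: "8K" = 7680x4320 and "4K" = 3840x2160 (the common UHD formats);
# real headset panels may use other dimensions.

def megapixels(width: int, height: int) -> float:
    """Total pixel count of a display, in millions of pixels."""
    return width * height / 1_000_000


mp_8k = megapixels(7680, 4320)
mp_4k = megapixels(3840, 2160)

# Ratio computed from whole pixel counts to avoid rounding noise.
pixel_ratio = (7680 * 4320) / (3840 * 2160)

print(f"8K per eye: {mp_8k:.1f} MP")
print(f"4K per eye: {mp_4k:.1f} MP")
print(f"8K has {pixel_ratio:.0f}x the pixels of 4K")
```

Doubling both the width and the height of a display quadruples its pixel count, which is why an 8K panel carries four times as many pixels as a 4K one.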

Following a long period with little or no activity, the VR industry saw a major revival from around 2015 onwards. A prototype of the Oculus Rift and its subsequent commercial release led to dozens of competitors within a few years, including models with better resolution and fields of view (FOV).

Having initially been a somewhat expensive and niche form of entertainment, VR declined greatly in cost during the 2020s. The COVID-19 pandemic accelerated its mainstream adoption.

By 2030, the quality of VR has improved exponentially.* The latest screens now provide breathtaking detail and realism, ultra-low latency, and wide FOV, while a variety of new features are combining to enhance the level of immersion and interactivity still further. For example, most headsets now include as standard the option for a brain-computer interface (BCI) to record users' electrical signals, enabling actions to be directed by merely thinking about them.* Such technology had already begun to emerge some years previously, but has now improved greatly in terms of speed, accuracy and ubiquity.

Non-invasive sensors placed on the scalp are by far the preferred choice for mainstream BCI use. However, more advanced options for invasive BCIs have now begun to emerge, as the technology shifts from purely clinical uses (such as treating paralysis) into business, leisure and entertainment.* Although still at a niche and experimental phase of development, early adopters willing to undergo surgery and have electrodes touching the surface of their brain can use bidirectional links for both reading and writing information to their neocortex.

In VR gaming, these more invasive BCIs can increase the level of immersion, tricking the senses in ways that bring a player closer to the action. New visual, auditory and tactile sensations are made possible by stimulating both the motor and visual cortex.* These effects are rather limited at this stage and exploited by only the most hardcore gamers – but provide more lifelike ways of interacting with simulated people, objects and environments.

This decade sees much progress with BCI technology as the number of electrodes used in the implants grows by leaps and bounds, enabling larger and more complex brain patterns to be recorded and decoded. In addition to gaming, BCIs gain popularity from the enhancement of wellness functions, such as for guided meditation and the improvement of sleep quality. At the same time, ethical issues are emerging over consent, privacy, identity, and agency, especially when BCIs are combined with AI.

100 terabyte HDDs reach consumer level

As of 2020, consumer-level hard disk drives (HDDs) featured capacities up to a maximum of about 18 terabytes (TB). While solid state drives (SSDs) had been available with greater storage sizes for enterprise-grade users, as well as faster transfer speeds, traditional HDDs remained a relevant and attractive alternative thanks to their much lower costs.

With perpendicular magnetic recording (PMR) approaching its limits, even boosted with two-dimensional magnetic recording (TDMR), the capacity of HDDs had seen a reduced rate of growth in the 2010s. However, an innovative technique known as heat assisted magnetic recording (HAMR) allowed data to be written to much smaller spaces. This provided a major acceleration in storage capacities during the 2020s, creating a new paradigm for HDDs, with successive generations now able to jump in larger steps of 4TB, 6TB, or even 10TB at a time.

Although data densities continued to grow rapidly, transfer speeds became an increasingly important consideration. Vast storage volumes needed to be accessible at rates commensurate with their size. To boost the IOPS per TB performance of HDDs, multi-actuator drives began to emerge. For example, using two actuators instead of one could almost double throughput.

With 50TB consumer-level HDDs on sale in 2026, hard drive makers continued to innovate and find ways of making data both smaller and faster, driven by the world's ever-growing demand for storage. By 2030, consumer PC users have access to 100TB HDDs, more than quintupling the figure of a decade earlier.*
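The capacity milestones in this section imply a fairly steady pace of growth. The sketch below estimates the annual rate between each pair of quoted figures, assuming smooth compounding from year to year (a simplification, since real products arrive in discrete generations). The names used are illustrative.

```python
# Illustrative arithmetic only: capacities (in TB) are those quoted in the text.
# ASSUMPTION: capacity grows at a constant compound rate between milestones.

def annual_growth(start_tb: float, end_tb: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.20 means 20% a year)."""
    return (end_tb / start_tb) ** (1 / years) - 1


CAPACITY_TB = {2020: 18.0, 2026: 50.0, 2030: 100.0}

rate_2020_2026 = annual_growth(CAPACITY_TB[2020], CAPACITY_TB[2026], 2026 - 2020)
rate_2026_2030 = annual_growth(CAPACITY_TB[2026], CAPACITY_TB[2030], 2030 - 2026)
rate_2020_2030 = annual_growth(CAPACITY_TB[2020], CAPACITY_TB[2030], 2030 - 2020)

decade_multiple = CAPACITY_TB[2030] / CAPACITY_TB[2020]

print(f"2020-2026: {rate_2020_2026:.1%} a year")
print(f"2026-2030: {rate_2026_2030:.1%} a year")
print(f"2020-2030: {rate_2020_2030:.1%} a year ({decade_multiple:.1f}x overall)")
```

Each interval comes out at a little under 19% a year, so the quoted milestones are consistent with one another.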

Completion of Saudi Vision 2030

This year sees the realisation of a long-term strategic framework by Saudi Arabia, intended to reduce the country's dependence on oil, diversify its economy and develop public service sectors such as education, health, infrastructure, recreation, and tourism. The key goals of "Saudi Vision 2030" include reinforcing economic and investment activities, increasing non-oil international trade, and promoting a softer and more secular image of the Kingdom.

Crown Prince Mohammed bin Salman first announced the vision in 2016. The Saudi Council of Economic and Development Affairs (CEDA) then began identifying and monitoring the steps crucial for implementation by 2030. The CEDA established 13 programs, called Vision Realisation Programs, covering areas such as energy, finance, housing, quality of life, and transport.

The Kingdom's goals for 2030* included:

• To move Saudi Arabia from the 19th largest economy in the world into the top 15

• To increase non-oil government revenue from SAR 163 billion (US$43.5bn) to at least SAR 1 trillion (US$267bn)

• To increase women's participation in the workforce from 22% to 30%

• To increase small and medium-sized enterprise (SME) contribution to GDP from 20% to 35%

• To increase the private sector's contribution from 40% to 65% of GDP

• To increase household spending on cultural and entertainment activities inside the Kingdom from the current level of 2.9% to 6%

• To increase the ratio of individuals exercising at least once a week from 13% of the population to 40%

• To increase the average life expectancy from 74 years to 80 years

• To have three Saudi cities be recognised in the top 100 cities in the world

• To more than double the number of Saudi heritage sites registered with UNESCO

Alongside these socioeconomic measures, proposals for various large-scale projects began to emerge. The developers intended to both improve the country's domestic transport and infrastructure, and to showcase Saudi Arabia to the world as a destination for leisure and investment. They included new retail, hotel, entertainment, cultural and residential megaprojects, as well as industrial, logistics, and corporate facilities.

By far the costliest and most prominent took the form of a $500bn smart city in the northwestern corner of the country. Known as Neom – a portmanteau of the Greek word neos, meaning "new," and mustaqbal, the Arabic word for "future" – this would operate independently from the existing governmental framework, with its own tax and labour laws and an autonomous judicial system. According to its developers, Neom would be "a hub for innovation where global business and emerging players can research, incubate and commercialise ground-breaking technologies to accelerate human progress." In 2021, Saudi Crown Prince Mohammed bin Salman unveiled the first major development within the Neom zone, a planned city named "The Line".

The Line (as its name suggested) would consist of a long, linear development stretching for over 170 km (105 miles). This huge, continuous urban belt would enable the Red Sea coastline to the west to be linked with mountains and upper valleys in the east. The developers intended for it to redefine the traditional layout of a city by emphasising a strong focus on nature, liveability, health and community connections.

The Line's masterplan called for building "around nature, rather than over it" and specified large areas of land to be preserved for conservation. The need for cars and other vehicles would be eliminated, with all essential daily needs provided within a five-minute walk for every resident. The project would include a system of ultra-high-speed mass transit running its complete length, with businesses and communities also hyper-connected through a digital framework incorporating artificial intelligence (AI) and robotics. The AI would monitor the city, using predictive and data models to optimise daily life for citizens in various ways. The Line would be self-sufficient with locally grown food, powered by 100% clean energy, home to abundant parks and other green spaces, and with sustainable and regenerative practices adopted throughout the city.

The developers completed phase one of The Line by 2025. Following subsequent expansion, its population has reached over a million by 2030* and the city has now boosted Saudi Arabia's economy by SAR 180 billion (US$48bn).*

In addition to advanced technologies, The Line boasts other features. Its location makes it favourable in terms of weather and climate conditions, being one of the few areas in Saudi Arabia to experience snowfall in winter, as well as benefitting from the ocean breeze and aquatic recreation opportunities. Temperatures are 10°C lower than the average for the Arabian Peninsula. As a further geographic advantage, it can also be reached by more than 40% of the world's population in less than a four-hour flight, while 13% of global trade already flows through the Red Sea.

The Line serves as a model for future developments within the Neom zone, while also inspiring other large-scale infrastructure projects both in Saudi Arabia and around the world.

The High-Luminosity Large Hadron Collider enters operation

The High-Luminosity Large Hadron Collider (HL-LHC) enters operation at CERN, marking the most extensive upgrade in the history of the Large Hadron Collider. This new configuration increases the machine's luminosity by a factor of five to seven. Luminosity measures how many particle collisions occur in a given time: higher luminosity means more collisions, more data, and a greater chance of spotting rare phenomena. Over its lifetime, the HL-LHC collects around ten times more data than the original LHC.

This dramatic increase allows physicists to study known particles with far greater precision and to search more effectively for signs of physics beyond the Standard Model. The upgraded collider produces at least 15 million Higgs bosons per year, compared with around 1.2 million produced during the LHC's first runs in 2011–2012, enabling detailed measurements of its properties that were previously impossible.

CERN had originally aimed to bring the HL-LHC online in the mid-2020s, but the schedule shifted due to the scale and complexity of the required upgrades. Although some early components were installed during the LHC's second long shutdown (2018–2022), most of the new equipment and major experiment upgrades were carried out during Long Shutdown 3, which took place between 2026 and 2029. Following installation and commissioning, the start-up of HL-LHC physics operations (Run 4) occurs in 2030.*

With data collection now under way, researchers can probe the Higgs boson with unprecedented accuracy and place far tighter constraints on new theories, helping to guide the future direction of high-energy physics beyond the LHC era.

© CERN

The entire ocean floor is mapped

While humans had long ago conquered the Earth's land masses, the deep oceans lay mostly unexplored. In the early years of the 21st century, only 20% of the global ocean floor had been mapped in detail. Even the surfaces of the Moon, Mars and other planets were better understood. With data now becoming as important a commodity as oil, researchers set out to acquire knowledge of the remaining 80% and uncover a potential treasure trove of hidden information.

Seabed 2030 – a collaborative project between the Nippon Foundation of Japan and the General Bathymetric Chart of the Oceans (GEBCO) – aimed to bring together all available bathymetric data to produce a definitive map of the world ocean floor by 2030.

As part of the effort, fleets of automated vessels capable of trans-oceanic journeys would cover millions of square miles, carrying with them an array of sensors and other technology. These uncrewed ships, monitored by a control centre in Southampton, UK, would deploy tethered robots to inspect points of interest all the way down to the floor of the ocean, thousands of metres below the surface.

By 2030, the project is largely complete.** The maps provide a wealth of new information on the global seabed, revealing its geology in unprecedented detail and showing the location of ecological hotspots, as well as many shipwrecks, crashed planes, archaeological artefacts and other unique and interesting sites. Commercial applications include the inspection of pipelines, and surveying of bed conditions for telecoms cables, offshore wind farms and so on. However, concerns are raised over the potential impact of new undersea mining technology, the opportunities for which are now greatly increased.

References

1 NASA's Europa Clipper, NASA:

https://europa.nasa.gov/

Accessed 25th July 2021.

2 Europa Clipper, Wikipedia:

https://en.wikipedia.org/wiki/Europa_Clipper

Accessed 25th July 2021.

3 Temperatures, Climate Action Tracker:

https://climateactiontracker.org/global/temperatures/

Accessed 7th August 2022.

4 Economic losses from weather-related events, 1970-2060, Future Timeline – Data & trends:

https://www.futuretimeline.net/data-trends/22-global-warming-future-insurance-trend.htm

Accessed 7th August 2022.

5 Emissions Gap Report 2021, UNEP:

https://www.unep.org/resources/emissions-gap-report-2021

Accessed 7th August 2022.

6 State of Climate Action 2021, Climate Action Tracker:

https://climateactiontracker.org/documents/989/state_climate_action_2021.pdf

Accessed 7th August 2022.

7 Climeworks takes another major step on its road to building gigaton DAC capacity, Climeworks:

https://newsroom.climeworks.com/196012-climeworks-takes-another-major-step-on-its-road-to-building-gigaton-dac-capacity

Accessed 7th August 2022.

8 The Ministry for the Future, by Kim Stanley Robinson.

9 China is building up its nuclear arsenal faster than predicted, U.S. report says, NBC News:

https://www.nbcnews.com/news/world/china-building-nuclear-weapons-arsenal-faster-rcna121349

Accessed 26th November 2023.

10 China officially plans to move ahead with super-heavy Long March 9 rocket, Ars Technica:

https://arstechnica.com/science/2021/02/china-officially-plans-to-move-ahead-with-super-heavy-long-march-9-rocket/

Accessed 5th March 2021.

11 China's super-heavy-lift rocket awaits state approval, to serve in lunar manned mission around 2030: experts, Global Times:

https://www.globaltimes.cn/page/202103/1216913.shtml

Accessed 5th March 2021.

12 As 5G looms, China's already looking at 6G development, CNET:

https://www.cnet.com/news/as-5g-looms-chinas-already-looking-at-6g-development/

Accessed 3rd April 2019.

13 FCC Opens Spectrum Horizons for New Services & Technologies, FCC:

https://www.fcc.gov/document/fcc-opens-spectrum-horizons-new-services-technologies

Accessed 3rd April 2019.

14 6G will achieve terabits-per-second speeds, Network World:

https://www.networkworld.com/article/3305359/6g-will-achieve-terabits-per-second-speeds.html

Accessed 3rd April 2019.

15 The Truth About Terahertz, IEEE Spectrum:

https://spectrum.ieee.org/aerospace/military/the-truth-about-terahertz

Accessed 3rd April 2019.

16 China plans 6G mobile with terabit speeds by 2030, Future Timeline Blog:

https://www.futuretimeline.net/blog/2018/11/22.htm

Accessed 3rd April 2019.

17 Energy crisis, Wikipedia:

https://en.wikipedia.org/wiki/Energy_crisis

Accessed 20th December 2015.

18 "GRID 2030" A NATIONAL VISION FOR ELECTRICITY'S SECOND 100 YEARS, U.S. Department of Energy:

http://energy.gov/oe/downloads/grid-2030-national-vision-electricity-s-second-100-years

Accessed 26th December 2012.

19 "'We'll learn an awful lot from those projects,' said DeBlasio. 'This is the first big investment in smart grid, but it will take 20 years to deploy the technology and along the way we will create a body of standards for it,' he added."

See IEEE kicks off smart grid effort in June, EE Times:

http://www.eetimes.com/electronics-news/4082845/IEEE-kicks-off-smart-grid-effort-in-June

Accessed 26th December 2012.

20 Smart Grid Could Shave U.S. Emissions by 2030, Scientific American:

http://www.scientificamerican.com/article.cfm?id=smar-meter-grid-electricity-sensor-energy-savings

Accessed 26th December 2012.

21 Germany's Scholz seals deal to end Merkel era, BBC News:

https://www.bbc.co.uk/news/world-europe-59399702

Accessed 19th December 2021.

22 Sónar celebrates 25 years of the festival by contacting intelligent extraterrestial life, Sonar:

http://intranet.sonar.es/mailing/1128/en.html

Accessed 30th November 2017.

23 FIFA World Cup 2030™: Morocco, Portugal and Spain joint bid is sole candidate to host, FIFA:

https://www.fifa.com/fifaplus/en/tournaments/mens/worldcup/articles/world-cup-2030-spain-portugal-morocco-host-centenary-argentina-uruguay-paraguay

Accessed 21st May 2024.

24 New superbug-killing antibiotic discovered using AI, BBC News:

https://www.bbc.co.uk/news/health-65709834

Accessed 6th June 2023.

25 Global and nationwide costs: statistics, Mental Health Foundation:

https://www.mentalhealth.org.uk/explore-mental-health/statistics/global-nationwide-costs-statistics

Accessed 6th June 2023.

26 Child mortality estimates, UNICEF:

http://www.childmortality.org/

Accessed 24th September 2016.

27 Goal 3: Ensure healthy lives and promote well-being for all at all ages, UN Sustainable Development Goals:

http://www.un.org/sustainabledevelopment/health/

Accessed 24th September 2016.

28 See 2020.

29 Child deaths will go down, and more diseases will be wiped out, 2015 Gates Annual Letter:

https://www.gatesnotes.com/2015-annual-letter?page=1&lang=en&WT.mc_id=01_30_2015_AL2015-BG_TW_ChildDeathsInfo_HealthHeader_11

Accessed 24th September 2016.

30 See 2029.

31 Child deaths will go down, and more diseases will be wiped out, 2015 Gates Annual Letter:

https://www.gatesnotes.com/2015-annual-letter?page=1&lang=en&WT.mc_id=01_30_2015_AL2015-BG_TW_ChildDeathsInfo_HealthHeader_11

Accessed 24th September 2016.

32 Superbugs could kill 10 million people a year by 2050, Future Timeline Blog:

https://www.futuretimeline.net/blog/2016/05/19.htm

Accessed 24th September 2016.

33 The Future of the Global Muslim Population, Pew Research:

http://www.pewforum.org/2011/01/27/the-future-of-the-global-muslim-population/

Accessed 11th October 2013.

34 See 2055.

35 See 2055.

36 See 2060.

37 Ugly Truth of Space Junk: Orbital Debris Problem to Triple by 2030, Space.com:

http://www.space.com/11607-space-junk-rising-orbital-debris-levels-2030.html

Accessed 22nd December 2011.

38 Government backs UK launch site plan for space tourism, BBC:

http://www.bbc.co.uk/news/science-environment-27222077

Accessed 2nd May 2014.

39 Space Innovation and Growth Strategy 2014-2030, Space IGS:

http://www.bis.gov.uk/assets/ukspaceagency/docs-2013/igs-action-plan.pdf

Accessed 2nd May 2014.

40 A UK Space Innovation and Growth Strategy 2010 to 2030, Space IGS:

http://www.bis.gov.uk/assets/ukspaceagency/docs/igs/space-igs-exec-summary-and-recomm.pdf

Accessed 2nd May 2014.

41 Hypersonic Successor to Legendary SR-71 Blackbird Spy Plane Unveiled, Wired:

http://www.wired.com/autopia/2013/11/lockheed-martin-sr-72/

Accessed 6th November 2013.

42 Dead malls: Half of America's shopping centres predicted to close by 2030, ABC:

http://www.abc.net.au/news/2015-01-28/the-decline-of-american-shopping-malls/6050956

Accessed 25th March 2015.

43 I 3D Printed My Clothes For A Trip To NYC!! ARE THEY WEARABLE??, YouTube:

https://www.youtube.com/watch?v=54kjB3wOOtQ

Accessed 27th December 2023.

44 McKinsey & Co.: Metaverse could reach $5 trillion in value by 2030, VentureBeat:

https://venturebeat.com/2022/06/15/mckinsey-co-metaverse-could-reach-5-trillion-in-value-by-2030/

Accessed 20th July 2022.

45 Virtual reality – future trends, Future Timeline – Data and Trends:

https://www.futuretimeline.net/data-trends/19-virtual-reality-future-trends.htm

Accessed 21st April 2021.

46 Welcome to 2030: Are you ready for the internet of the senses?, ZDNet:

https://www.zdnet.com/article/are-you-ready-for-the-internet-of-the-senses/

Accessed 21st April 2021.

47 10 years from now your brain will be connected to your computer, ZDNet:

https://www.zdnet.com/article/10-years-from-now-your-brain-will-be-connected-your-computer/

Accessed 21st April 2021.

48 Gabe Newell on Brain-computer Interfaces: 'We're way closer to The Matrix than people realize', Road to VR:

https://www.roadtovr.com/gabe-newell-brain-computer-interfaces-way-closer-matrix-people-realize/

Accessed 21st April 2021.

49 Seagate: 100TB HDDs Due in 2030, Multi-Actuator Drives to Become Common, Tom's Hardware:

https://www.tomshardware.com/uk/news/seagate-technology-roadmap-2021

Accessed 1st April 2021.

50 Saudi Vision 2030, Official website:

https://www.vision2030.gov.sa/en

Accessed 21st February 2021.

51 Oil-free future: What is Saudi Arabia's Neom project, The Times of India:

https://timesofindia.indiatimes.com/business/international-business/what-is-saudi-arabias-neom-project-key-things-to-know/articleshow/80212855.cms

Accessed 21st February 2021.

52 The Line launch media kit, NEOM:

https://newsroom.neom.com/the-line-launch-media-kit#

Accessed 21st February 2021.

53 High-Luminosity LHC, CERN:

https://home.cern/resources/faqs/high-luminosity-lhc

Accessed 17th January 2026.

54 Ocean Infinity's Unmanned Robot Ship Fleet Will Map Out the Entire Seabed, Interesting Engineering:

https://interestingengineering.com/ocean-infinitys-unmanned-robot-ship-fleet-will-map-out-the-entire-seabed

Accessed 8th March 2020.

55 The Nippon Foundation-GEBCO Seabed 2030 Project, GEBCO:

https://seabed2030.gebco.net/

Accessed 8th March 2020.
